A Chatbot Story – How We Built a Comprehensive Onboarding Assistant for a Leading Research University

How Chatbots Can Enhance Student Onboarding

Conversational interfaces have gone mainstream. The underlying technology keeps crossing new milestones, and as a result chatbots have evolved from simple Q&A systems into intelligent personal assistants. Bots have found widespread application in diverse areas, most recently in education.

Although education has historically lagged in technology adoption, it has lately taken a renewed interest in it. Educators are on the lookout for innovative ed-tech systems for efficient tutoring, and students increasingly prefer personalized learning environments.

Deploying chatbots at front ends such as college or university websites, internal student communication portals, or popular instant messaging platforms can help with that. Here’s how.

Chatbots offer a personalized and engaging learning experience that adapts to each learner’s pace, which puts student-centered learning at the forefront. Configuring a bot to answer student inquiries about curriculum, courses, admissions, and so on, and to deliver learning resources on request, gives every student a personal, always-available assistant.

That’s exactly what we did, though in a different way.

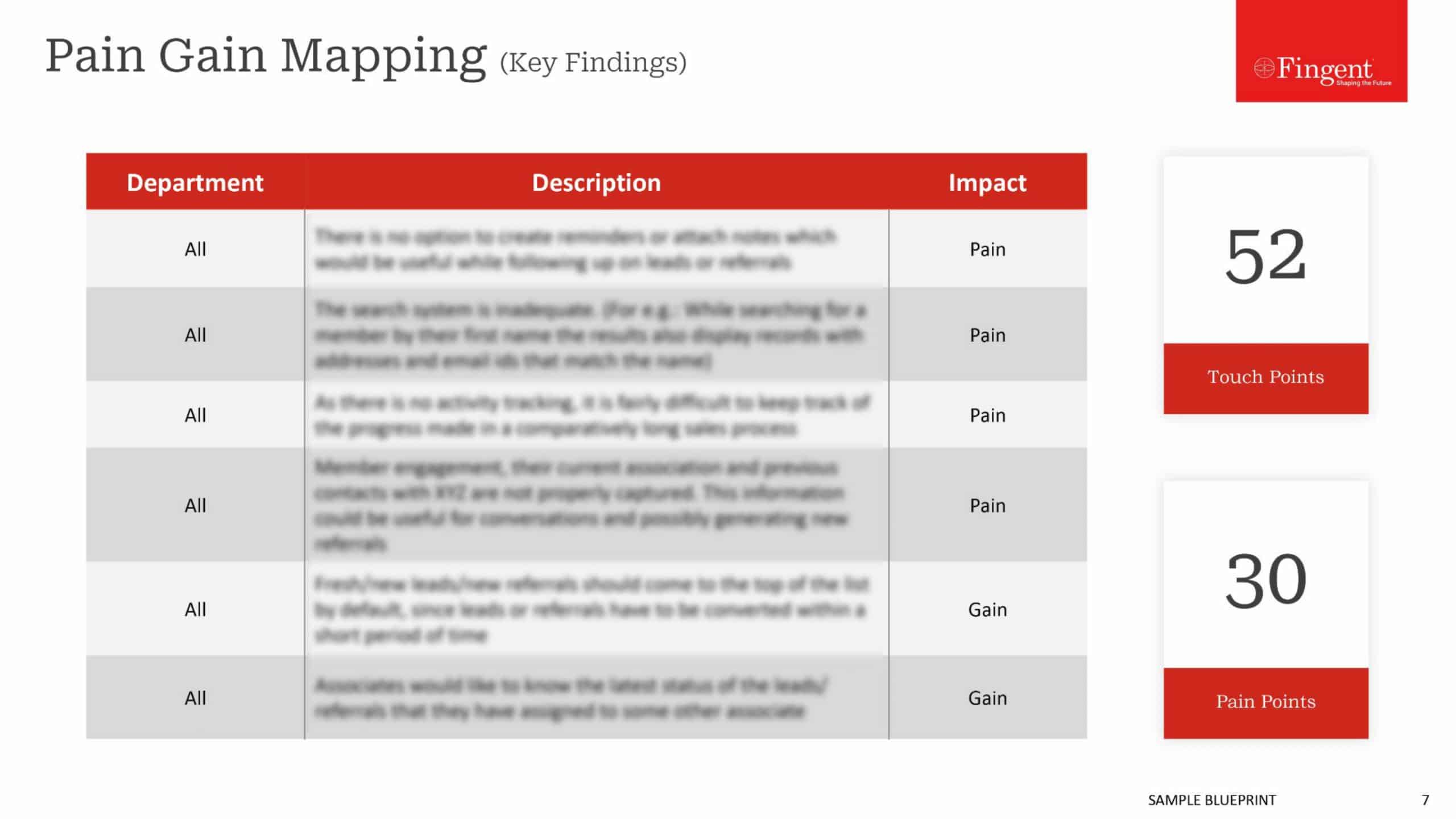

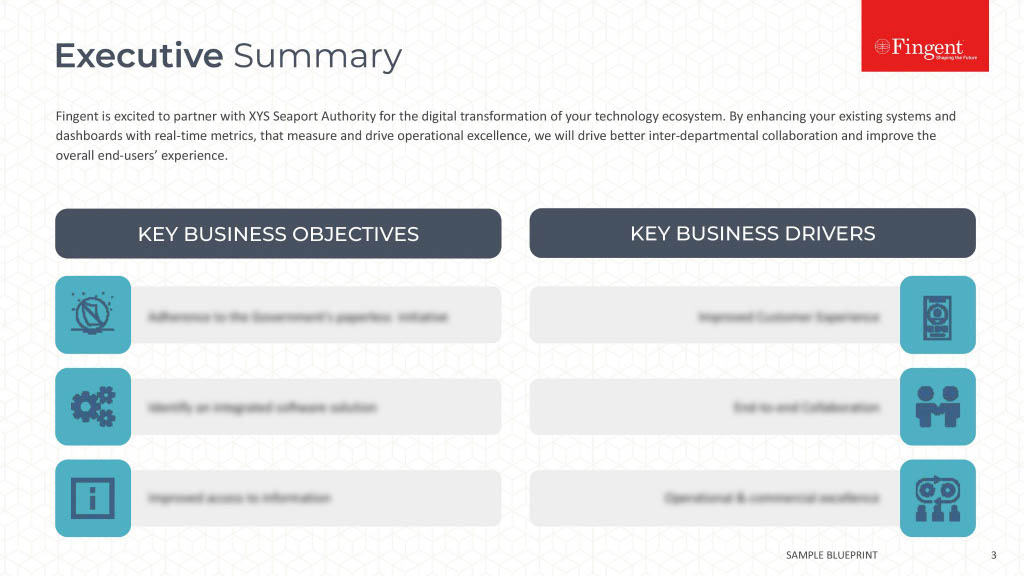

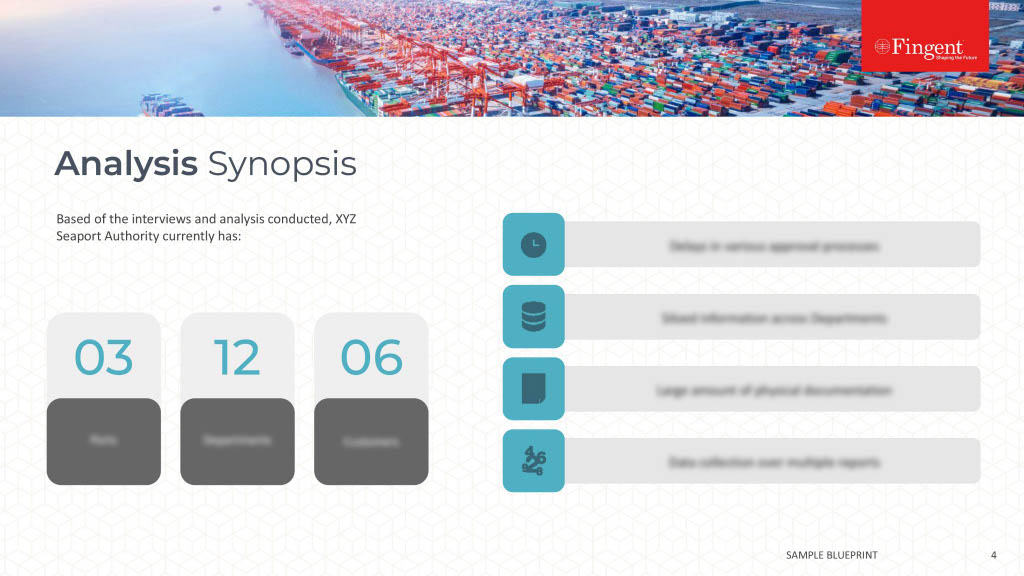

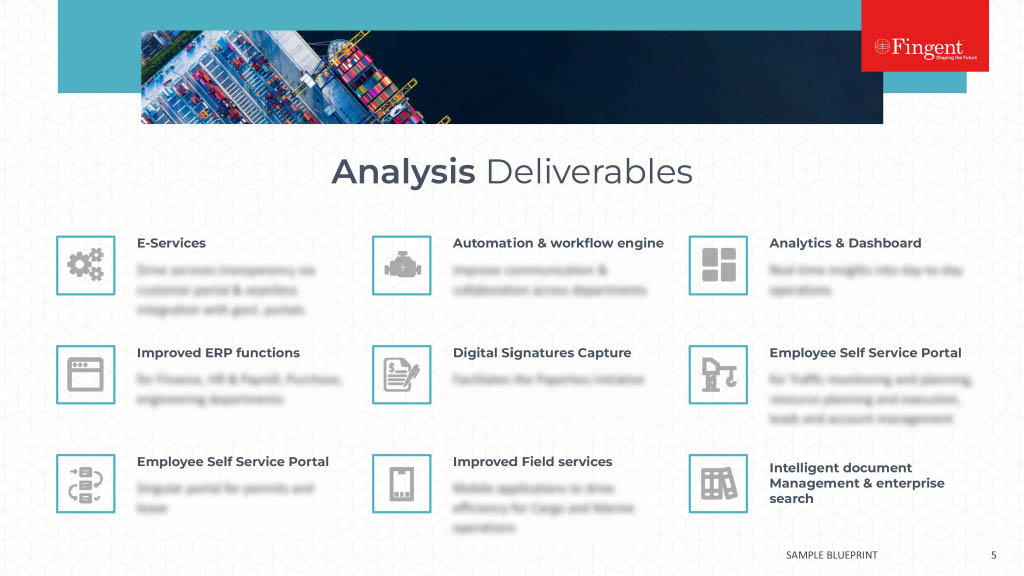

Recently, Fingent was approached by a client, a leading public research university based in Australia, to develop an intelligent chatbot that assists prospective and newly enrolled candidates with onboarding and orientation, course-related information, credit scores, etc. The client wanted to streamline its entire student orientation process using a chatbot, make all related information more accessible and context-based, and systematically tackle ‘summer melt’ rates.

Here, we give a high-level overview of this chatbot development experience, powered by IBM Watson Assistant and backed by .NET Core. It covers the various facets of the design and development of the system and the challenges faced along the way.

The Plot (Objective)

“Build a chatbot to assist candidates during the orientation process of Monash University. The chatbot should be capable of handling different context-based scenarios such as listing available courses, providing credit score information, course structure, projects associated with each course and many more.”

Since this is a Proof of Concept (POC) project, and Monash University offers a wide range of courses across various areas of education, the team decided to choose one particular area and focus on only two selected courses (Bachelor of Accounting & Bachelor of Actuarial Science). This kept the scope under control, given the time span and resource availability.

Foundation

To build a highly sophisticated chatbot, we first had to settle on a mature chatbot assistant technology that provides comprehensive user intent identification and processing as well as satisfactory responses to user queries. The system also had to offer an extensive training interface that demands little technical expertise. The hunt for such a technology ended with IBM Watson Assistant.

Terminologies

The world of chatbots has some common terms that are essential knowledge when developing a chatbot. We can call them the pillars of a chatbot.

- #Intent – An intent is the user’s intention in a query. It covers all the types of questions, and their variations, that the user is likely to ask. These can be queries within the scope or related to the scope.

Examples:

“What are all the courses available?”

– Intent associated: #KnowCourseInfo

“How much credit I require in the first semester?”

– Intent associated: #KnowCreditInfo

Remarks – Depending on the chatbot engine, there will be a stock collection of #intents designed to handle general greetings and conversational small talk. We can import or enable these intents as needed to make our chatbot more conversational and human-friendly.
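To make the idea of an intent more concrete, here is a minimal sketch of how intents and their example utterances might be organized before being entered into an assistant's training interface. The two intent names and the first utterance of each come from the examples above; the extra utterances and the word-overlap matcher are illustrative assumptions and not how IBM Watson Assistant classifies intents.

```python
# Hypothetical training data: each intent maps to example utterances.
# Only the first utterance per intent comes from the article; the second
# one is an assumed variation added for illustration.
INTENT_EXAMPLES = {
    "KnowCourseInfo": [
        "What are all the courses available?",
        "Which courses can I enroll in?",
    ],
    "KnowCreditInfo": [
        "How much credit I require in the first semester?",
        "How many credits do I need this semester?",
    ],
}


def tokenize(text):
    """Lowercase the text and split it into a set of alphanumeric words."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def guess_intent(utterance):
    """Toy stand-in for a real classifier: pick the intent whose examples
    share the most words with the utterance, or None if nothing overlaps."""
    words = tokenize(utterance)
    best_intent, best_score = None, 0
    for intent, examples in INTENT_EXAMPLES.items():
        score = max(len(words & tokenize(example)) for example in examples)
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent
```

A real engine learns from many more examples per intent, but the data shape (intent name plus sample utterances) stays much the same.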

- @Entity – An entity is a subject addressed in the user query. There are two main categories of entities: scope-based entities and system entities. Scope-based entities belong to the scope we address, whereas system entities are “primitive system-aware” entities.

Examples:

“What are all the courses available?”

– Entities associated: @Course

“How much credit I require in the 1st semester?”

– Entities associated: @Credit, @Semester, @system_number:1st

Remarks – On diving deeper, we may need multiple types of scope-based entities and a system-aware way of specifying the relationships between entities (which IBM Watson Assistant lacks). This would make entity characteristics more descriptive and give the system the notion that it “knows” the given attributes and relationships of an entity.
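The entity examples above can be sketched as a small dictionary-based extractor. The entity names mirror the article's examples; the synonym lists, the `system_number` key, and the regular expression for numbers are assumptions made for illustration, and a production engine would use its own entity definitions and built-in system entities.

```python
import re

# Hypothetical scope-based entities: entity name -> value -> synonyms.
SCOPE_ENTITIES = {
    "Course": {"course": ["course", "courses"]},
    "Credit": {"credit": ["credit", "credits"]},
    "Semester": {"semester": ["semester", "semesters"]},
}

# A toy "system entity": digits with an optional ordinal suffix (1, 1st, 2nd).
NUMBER_PATTERN = re.compile(r"\b(\d+)(?:st|nd|rd|th)?\b")


def extract_entities(utterance):
    """Return a dict of entity name -> matched value found in the utterance."""
    text = utterance.lower()
    words = set(re.findall(r"[a-z0-9]+", text))
    found = {}
    for entity, values in SCOPE_ENTITIES.items():
        for value, synonyms in values.items():
            if words & set(synonyms):
                found[entity] = value
    number = NUMBER_PATTERN.search(text)
    if number:
        found["system_number"] = int(number.group(1))
    return found
```

Note that this flat structure has no way to say that a credit value belongs to a particular course and semester, which is the kind of relationship the remark above refers to.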

- Dialog – A dialog is a declarative way of specifying the possible questions the user may ask and how the bot should respond to them. Generally, this is a tree-based structure rooted in the key user intentions and the features covered by the scope. It handles the different scenarios of a single #intent as well as the edge cases.

- $Context Variable – A context variable stores information collected from, or otherwise related to, the dialog context. It helps us maintain the dialog context and facilitates conversational flow.

- Skill/Workspace (IBM Watson based) – A skill is a package that combines the above-mentioned elements into a single chatbot capability. In our case, it was the Onboarding skill.
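As a rough illustration of how a dialog and context variables fit together, the sketch below models dialog nodes as conditions on an intent plus required entities, checked from top to bottom, with a context dictionary carried between turns. The node layout, response texts, and the `pending_intent` variable are hypothetical; only the intent names and the two course names come from the article.

```python
from dataclasses import dataclass, field


@dataclass
class DialogNode:
    """One node of a hypothetical dialog tree."""
    intent: str                      # intent that must be detected
    response: str                    # reply template used when the node matches
    required_entities: tuple = ()    # entities that must also be present
    set_context: dict = field(default_factory=dict)  # context updates


# Nodes are checked top to bottom and the first match wins, so the more
# specific node (intent + entity) is listed before the general one.
DIALOG = [
    DialogNode("KnowCreditInfo", "Here are the credits for semester {semester}.",
               required_entities=("Semester",)),
    DialogNode("KnowCreditInfo", "Which semester do you mean?",
               set_context={"pending_intent": "KnowCreditInfo"}),
    DialogNode("KnowCourseInfo",
               "We offer Bachelor of Accounting and Bachelor of Actuarial Science."),
]

FALLBACK = "Sorry, I did not understand that."


def respond(intent, entities, context):
    """Return the reply of the first matching node and update the context."""
    for node in DIALOG:
        has_entities = all(name in entities for name in node.required_entities)
        if node.intent == intent and has_entities:
            context.update(node.set_context)
            # Remember recognized entities as lowercase context variables.
            context.update({name.lower(): value for name, value in entities.items()})
            return node.response.format(**context)
    return FALLBACK
```

For example, calling `respond("KnowCreditInfo", {}, ctx)` asks which semester is meant and records the pending intent in `ctx`, while a follow-up call that includes a `Semester` entity fills the `{semester}` placeholder from the context.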

Implementation

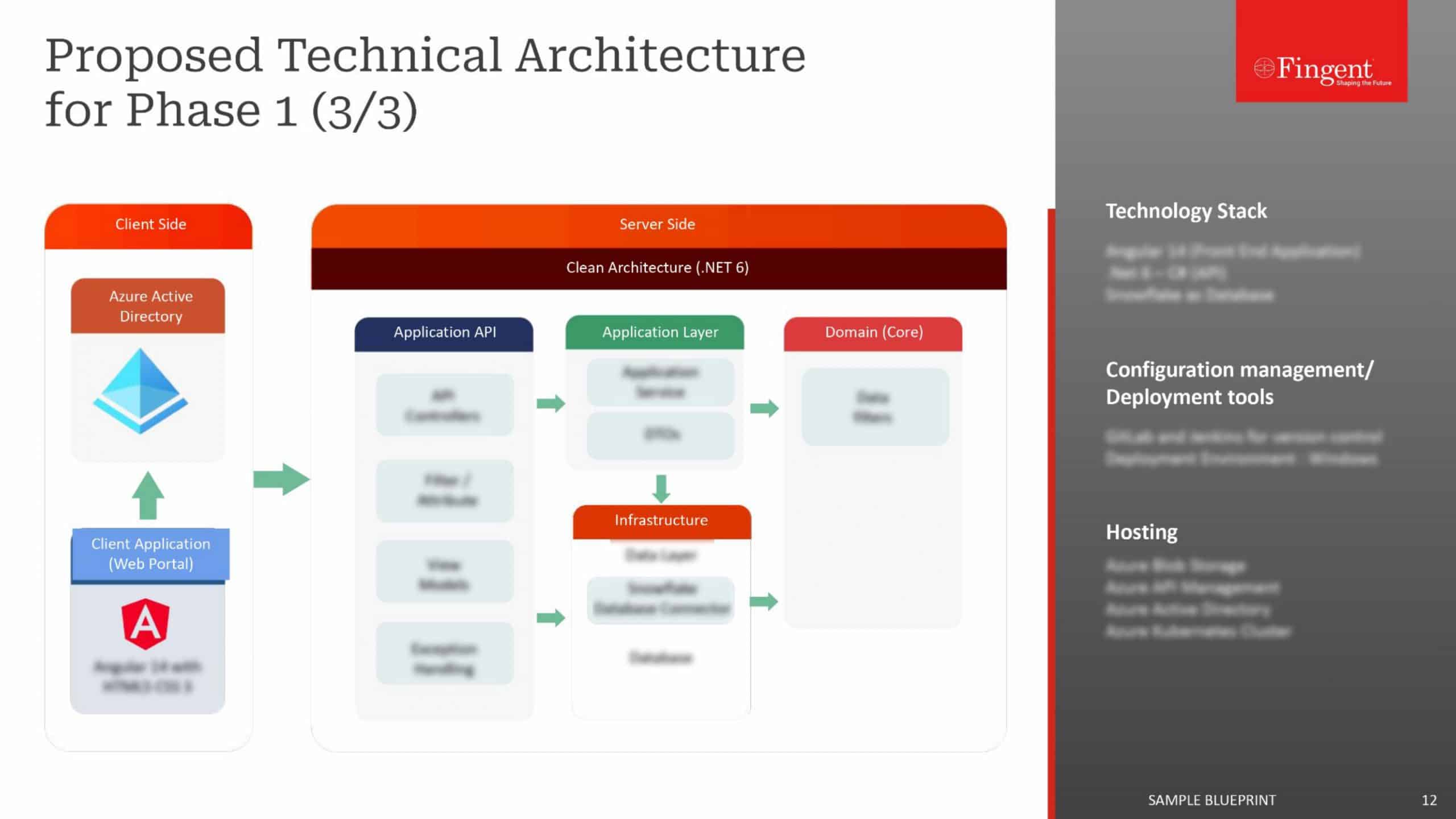

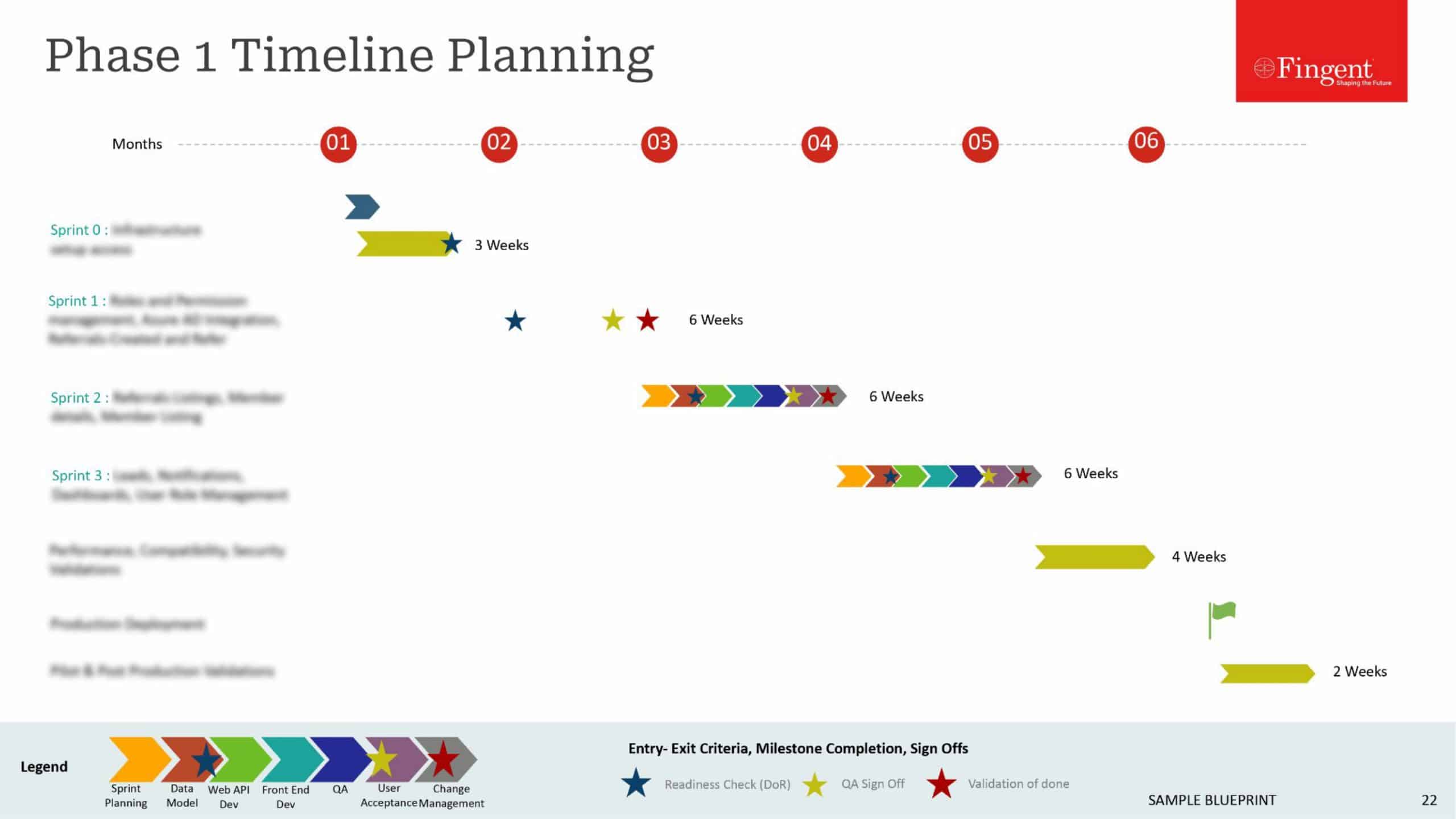

The entire development process was organized into two major tracks. The first covers building and improving the chatbot engine’s intelligence, while the other covers middleware and UI development.

1. Intelligence Build-up on top of IBM Watson Assistant

- Analyzed the requirements and fixed the boundaries of the scope, including the functional areas to be covered by the proposed chatbot.

- Prepared the possible user queries and categorized them as #intents.

- Identified the underlying @entities in each question and classified them to form the actual set of primitive entities.

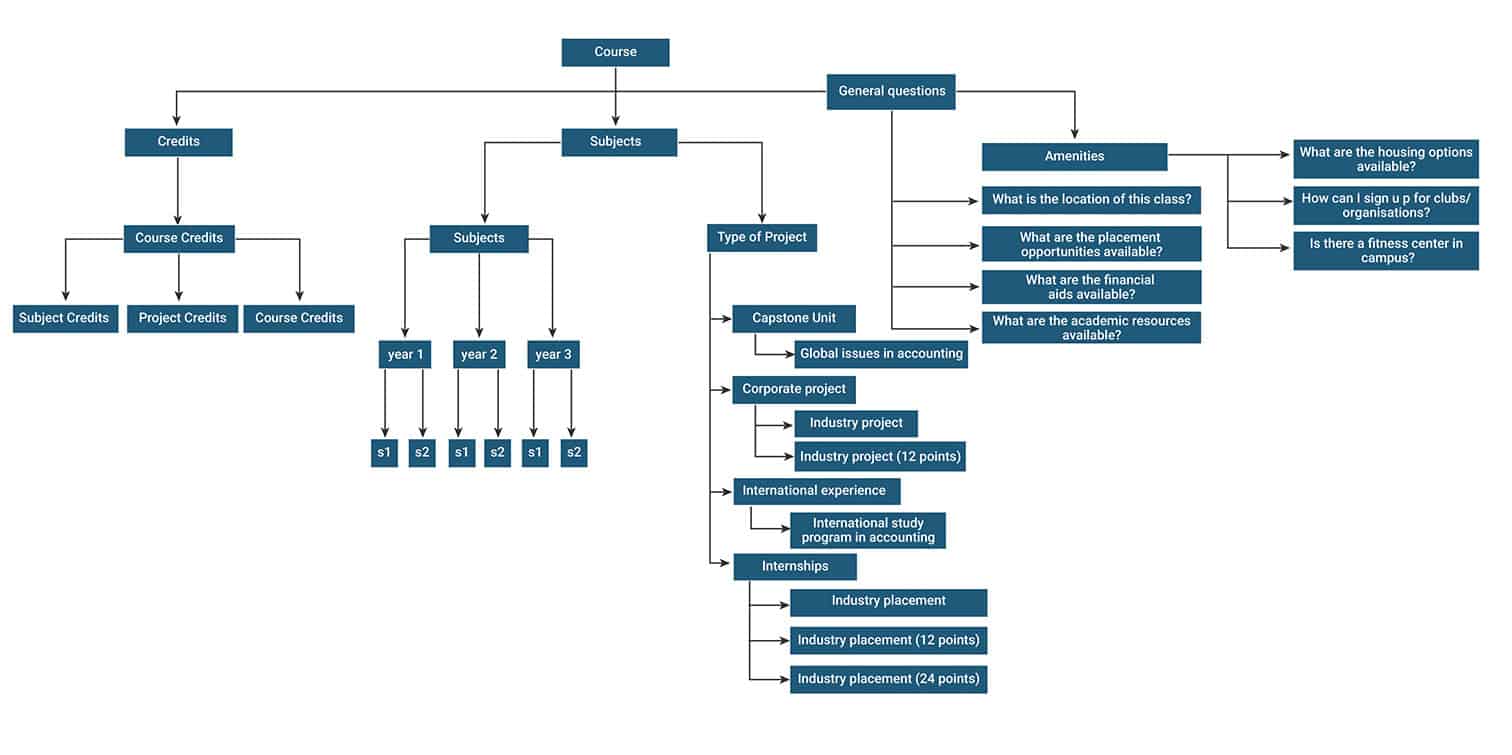

- Designed the dialog structure based on the prepared user query sets. See the resources: Intent structure and Dialogue flow.

Fig 1. Intent Structure

- Continuously refined the dialogue structure as edge cases were detected and to incorporate new scenarios.

- Used some conventions in responses to extend the chatbot’s response capabilities according to the requirements. These handle specific use cases such as clickable action lists, image responses, map responses, and lists of items.

- Implemented WebHooks (IBM Watson based) to talk to external APIs, both to fetch values for a dialogue node and to validate user input (not a comprehensive solution).

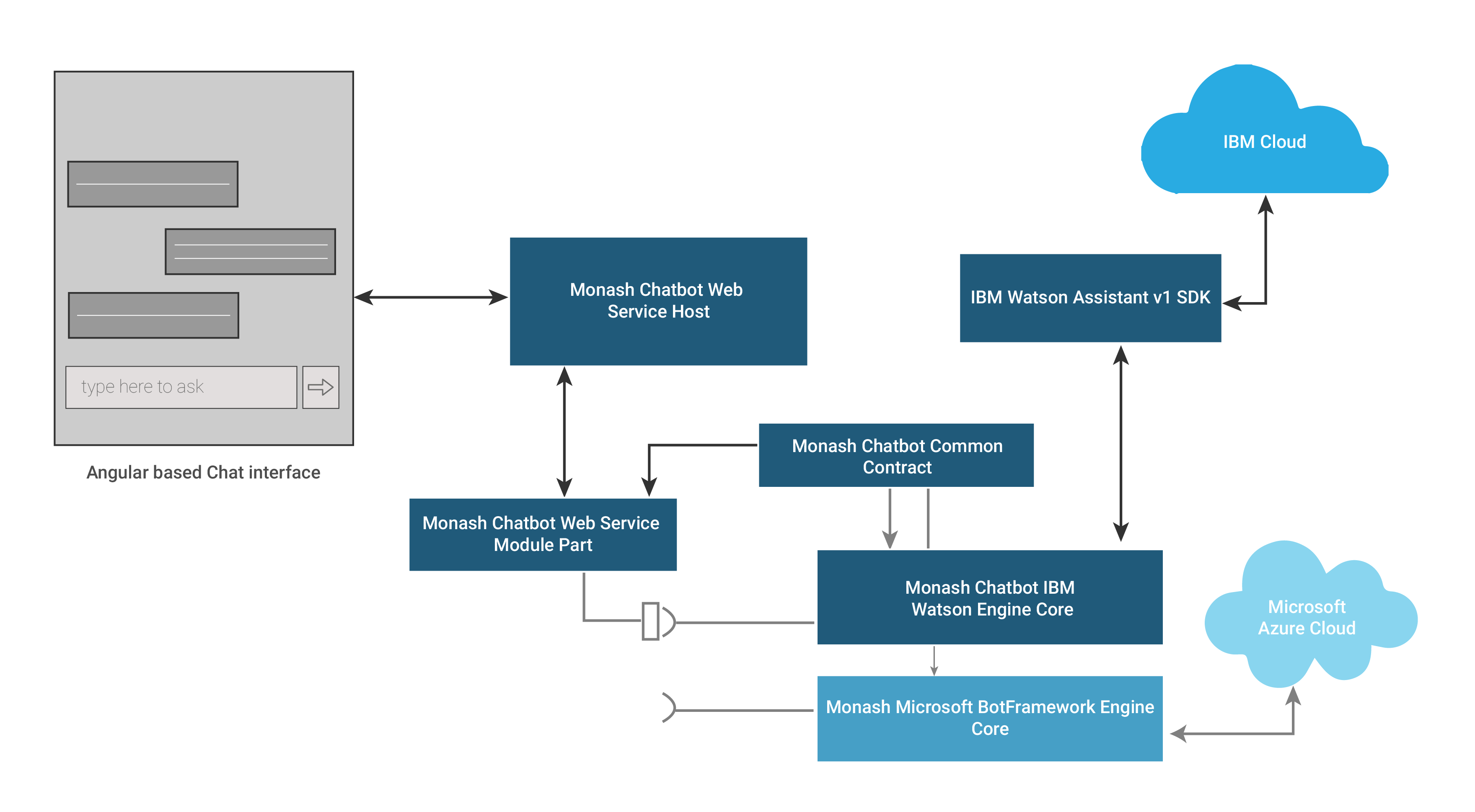
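The article does not spell out the response conventions used, so the following is only a sketch of one way such a convention could work: the bot's plain-text reply starts with a tag that tells the UI how to render the rest. The `[[type]]` tag syntax, the `|` separator, and the type names are all assumptions made for this example.

```python
import re

# Hypothetical convention: a reply may start with a tag such as "[[list]]",
# "[[actions]]", "[[image]]" or "[[map]]"; anything else is plain text.
TAG_PATTERN = re.compile(r"^\[\[(\w+)\]\]\s*(.*)$", re.DOTALL)
KNOWN_TYPES = {"list", "actions", "image", "map"}


def parse_response(raw):
    """Turn a raw reply string into a structure the UI can render."""
    text = raw.strip()
    match = TAG_PATTERN.match(text)
    if not match or match.group(1) not in KNOWN_TYPES:
        # Untagged or unrecognized replies fall back to plain text.
        return {"type": "text", "payload": text}
    kind, body = match.group(1), match.group(2).strip()
    if kind in ("list", "actions"):
        # List-like replies separate their items with "|".
        payload = [item.strip() for item in body.split("|") if item.strip()]
    else:
        payload = body
    return {"type": kind, "payload": payload}
```

Keeping the convention inside the reply text means the assistant's dialog editor needs no special support for rich responses; the UI layer decides how each type is displayed.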

2. Middleware and UI Development

- Built a middleware backed by .NET Core with the intention of plugging any chatbot service into the UI module. It is designed as a standard framework that separates the chatbot logic from the application logic. This enables hassle-free maintenance of the app logic, code reusability, and extensibility.

- Built the UI using Angular to provide a sophisticated face for our chatbots.

Fig 2. Dayton Interface

Also, we built a diagnostics module, as part of the UI, which provides the service configuration information and session-based transcripts of conversations held with the chatbot.

Fig 3. The architecture of the Chatbot Middleware Application, Source Code
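The separation between chatbot logic and application logic described above can be sketched as a small adapter layer. The actual middleware is built on .NET Core; the Python below is used only for brevity, and the class and method names (`ChatbotService`, `EchoService`, `ChatMiddleware`) are hypothetical, not taken from the project's source code.

```python
from abc import ABC, abstractmethod
from collections import defaultdict


class ChatbotService(ABC):
    """Contract that every pluggable chatbot backend is assumed to fulfil."""

    @abstractmethod
    def send(self, session_id: str, text: str) -> str:
        """Forward the user's text to the backend and return its reply."""


class EchoService(ChatbotService):
    """Stand-in backend used here instead of a real assistant API."""

    def send(self, session_id, text):
        return f"You said: {text}"


class ChatMiddleware:
    """Application-facing layer: routes messages and keeps transcripts."""

    def __init__(self, service: ChatbotService):
        self._service = service
        self._transcripts = defaultdict(list)  # session id -> list of turns

    def handle_message(self, session_id, text):
        reply = self._service.send(session_id, text)
        self._transcripts[session_id].append({"user": text, "bot": reply})
        return reply

    def transcript(self, session_id):
        """Return a copy of the session transcript, e.g. for diagnostics."""
        return list(self._transcripts.get(session_id, []))
```

Because the UI only talks to `ChatMiddleware`, swapping `EchoService` for another backend would not require changes to the application code, and the per-session transcript is the kind of data a diagnostics view could display.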

Challenges

During development, we came across some challenges with IBM Watson, which are listed below.

- Unable to map relationships between entities. Due to this limitation, we were unable to link and pull the related values of the entities.

- Conflicts between various entity values (solved partially via an entity split-up method).

- API limitations for managing the chatbot’s dialog schema.

- IBM Watson doesn’t provide active learning, that is, the self-learning capability to learn from user conversation sessions.

- It also doesn’t provide an efficient way to talk to external APIs. Only one external API can be called, which leads to a bottleneck when executing webhook actions.

- No built-in user input validation. This has to be done via WebHooks.
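When only a single external endpoint is available, one common pattern is to route every request through one handler that dispatches on an action name. The sketch below illustrates that idea together with simple input validation. It is not the approach confirmed by the article, and the payload shape (`action`, `params`), the action names, and the validators are assumptions.

```python
import re


def validate_email(params):
    """Check that the 'value' parameter looks like an e-mail address."""
    value = params.get("value", "")
    is_valid = re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", value) is not None
    return {"valid": is_valid}


def validate_range(params):
    """Check that an integer 'value' lies between 'min' and 'max' inclusive."""
    value, low, high = params.get("value"), params.get("min"), params.get("max")
    if not all(isinstance(item, int) for item in (value, low, high)):
        return {"valid": False}
    return {"valid": low <= value <= high}


# Hypothetical action registry: one webhook endpoint, many named actions.
ACTIONS = {
    "validate_email": validate_email,
    "validate_range": validate_range,
}


def handle_webhook(payload):
    """Dispatch a webhook payload to the handler named in its 'action' key."""
    action = ACTIONS.get(payload.get("action"))
    if action is None:
        return {"error": "unknown action"}
    return action(payload.get("params", {}))
```

The trade-off is that the single handler becomes a shared dependency for every dialog node that needs external data, which is consistent with the bottleneck noted above.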

Final Words

The application is now in showcase/UAT (User Acceptance Testing) mode, and the refinement process is in progress. It still has miles to go before it can converse with users as a comprehensive onboarding assistant.

To know how chatbots can enhance your business growth, get in touch with our software development experts today!
