Tag: Machine Learning

The automobile industry is one of the largest sectors in the world. In just the U.S., the car & automobile manufacturing industry boasts a market size of $104.1 billion.

However, even an ecosystem as large as the automotive industry is not immune to the unprecedented challenges over the last few years. The coronavirus pandemic and subsequent semiconductor chip shortage forced manufacturers to cut 11.3 million vehicles from production in 2021.

In addition to supply chain challenges, automotive manufacturers have contended with labor shortages, shifts in consumer demand, and pressures to create a sustainable work environment.

But, like any resilient industry, the automotive sector has leaned into these new challenges and has begun to address them proactively. Let’s take a closer look at what these hurdles entail and, more importantly, how automotive businesses can overcome them through the strategic use of technology.

Challenges and Trends Reshaping the Automotive Industry

While many challenges and trends prompt the automotive industry to evolve, three stand out above the rest. These roadblocks include:

1. Ongoing Worker Shortages

Like many other business verticals, the automotive industry has been plagued by worker shortages. Manufacturers are struggling to fill vacancies at every level of the organization, including line-level staff, decision-makers, and engineers.

This worker shortage has made it nearly impossible to rebuild supply and catch up to runaway consumer demand for new vehicles.

2. Supply Chain Disruptions

Various legs of the automotive supply chain have faced disruptions over the last few years. Of these, the shortfall of semiconductor chips had the most significant impact on production and vehicle inventory.

Unfortunately, many experts predict the shortage will continue well into 2023, if not beyond. It is too late for automakers to prepare for this extended chip shortage. All they can do now is adjust manufacturing strategies to align with consumer demand and cut back production on less popular vehicles.

3. The EV Revolution

Despite these other concerns, the electric vehicle (EV) market continues to grow. By Q4 of 2022, EV sales represented 5.6% of all auto transactions. This percentage doubled from the year prior when EV sales made up just 2.7% of the total auto market.

This statistic demonstrates that consumers are becoming more environmentally conscious and are interested in decreasing their impact on natural resources. Government incentives and tax credits are further contributing to the surging popularity of electric vehicles.

But what does all this have to do with the future of work in the automotive industry? It means that automakers will need to implement new and more sophisticated production processes and hire better talent if they hope to push the envelope in the EV space.

The New Industry Focus: Creating a Sustainable Work Environment

One of the biggest drivers of change in the automotive industry is a global push toward creating a sustainable work environment.

Historically, the automotive sector has been anything but sustainable. Traditional assembly line-based production strategies focus on efficiency at the expense of almost anything else. These tactics result in the consumption of excessive amounts of power and often produce an unnecessary amount of resource waste.

However, the next-generation automotive industry will likely be unrecognizable to the pioneers of the last century. Modern manufacturers are reimagining every aspect of the supply chain, from material sourcing to assembly and distribution. Visionaries and thought leaders are also encouraging a shift away from old-school engineering and development processes in favor of AI-powered practices prioritizing efficiency.

Even the retail sales aspect of the automotive industry is changing. Many dealers are shifting toward online transactions, and some are transitioning increasingly to a made-to-order sales model. The end result is a more agile and less wasteful automotive supply chain.

Technologies that Can Fuel the Auto Sector’s Metamorphosis

The future of work in the automotive industry will focus on sustainability, resilience, and agility while prioritizing efficiency. To realize their aspirations of a sustainable work environment, industry executives, managers, and workers must embrace leading-edge technologies, including:

1. Predictive Analytics Software

Predictive analytics software will influence numerous aspects of the automotive industry. Organizations interested in forging sustainable work environments can use these analytics tools to identify production waste and increase operational efficiency. Additionally, they can leverage these solutions to create more energy-efficient vehicles that produce fewer greenhouse gases.

Predictive analytics technologies will also assist with demand forecasting — organizational leaders can use these insights to prioritize in-demand vehicles as they contend with ongoing chip shortages.
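As a rough illustration of the demand-forecasting idea, the sketch below applies simple exponential smoothing to monthly order counts and ranks vehicle models by forecast demand. The model names, order figures, and the smoothing factor `alpha` are hypothetical, and a real forecasting system would likely use richer inputs and a validated model.

```python
from typing import Dict, List


def exponential_smoothing_forecast(history: List[float], alpha: float = 0.5) -> float:
    """Return a one-step-ahead forecast using simple exponential smoothing."""
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for observed in history[1:]:
        # Blend the newest observation with the running level.
        level = alpha * observed + (1.0 - alpha) * level
    return level


def rank_by_forecast(orders: Dict[str, List[float]], alpha: float = 0.5) -> List[str]:
    """Order vehicle models from highest to lowest forecast demand."""
    forecasts = {
        model: exponential_smoothing_forecast(history, alpha)
        for model, history in orders.items()
    }
    return sorted(forecasts, key=lambda model: forecasts[model], reverse=True)


# Hypothetical monthly order counts per vehicle model.
monthly_orders = {
    "compact_ev": [120, 150, 180, 210],
    "sedan": [200, 190, 170, 160],
    "pickup": [90, 95, 100, 98],
}
priority = rank_by_forecast(monthly_orders)
print(priority)
```

A ranking like this could help decide which models receive scarce chips first, although the right smoothing factor depends on how quickly demand shifts.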

2. Automation Tools

Automation tools will prove invaluable amid labor and talent shortages. Businesses in the automotive industry can use automation software to streamline redundant back-office processes and improve communication across the entire supply chain.

Manufacturers can also use automation tools to ramp up production while conserving energy and reducing waste.

3. Machine Learning and AI Solutions

Machine learning and artificial intelligence technologies can transform every link in the automotive industry supply chain. Businesses can use these complementary technologies to optimize raw material sourcing, vehicle distribution, and production.

Because they allow for a more data-driven approach to manufacturing and sales, these technologies can reduce waste while simultaneously creating more agile and resilient supply chains. In turn, this will help keep the costs of vehicles manageable, thereby increasing accessibility to energy-efficient automobiles and EVs.

Read more: AI and ML for Faster and Accurate Project Cost Estimation

Accelerate Your Transformation with Fingent

When your business is in the automotive industry, creating a sustainable work environment should be one of your top priorities. Doing so will help you attract and retain top talent, meet consumer demand for more efficient vehicles, and align your business model with the latest regulations and compliance frameworks.

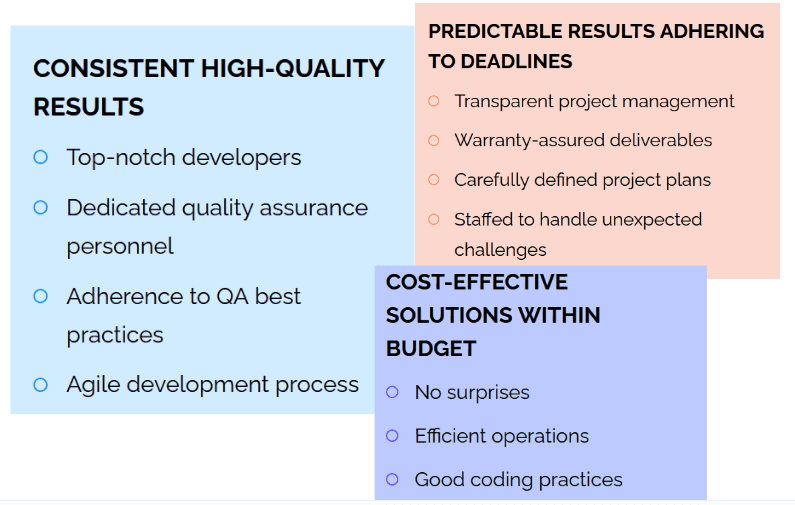

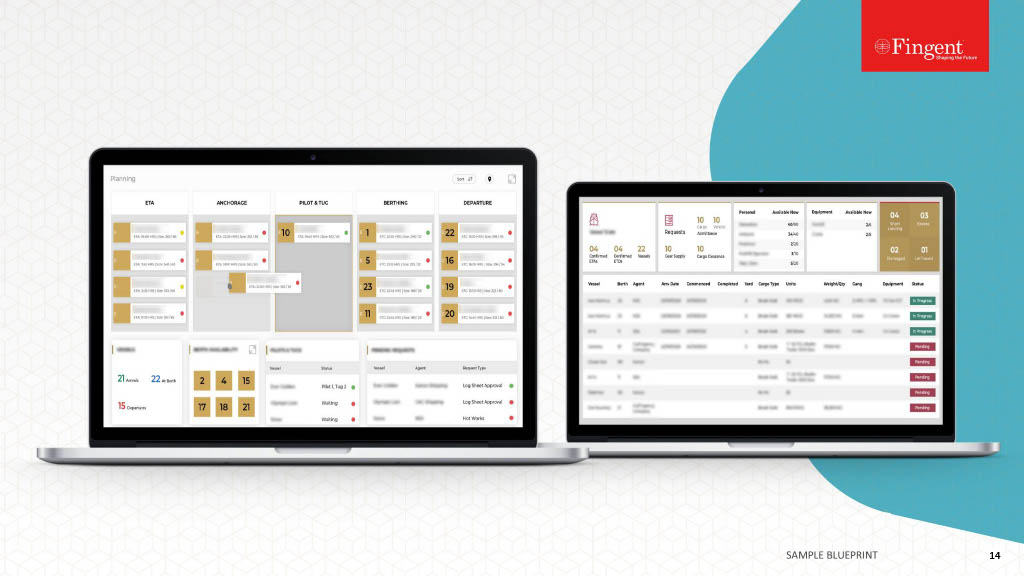

To achieve these goals, you will need access to purpose-built technologies designed for your business’s unique needs. That’s where Fingent, a top custom software development company, can help.

Our development experts can create dynamic software for your business. From customer-facing applications to internal solutions that empower your staff to be more productive, we build the software you need to thrive.

To learn more about our wide range of technology development services, connect with Fingent today.


No longer the stuff of science fiction, artificial intelligence (AI) and machine learning (ML) are revolutionizing the way customers interact with brands. Businesses that have embraced these technologies can reshape the customer experience, curate one-of-a-kind buyer journeys, and strengthen bonds with their target audiences.

As your organization works to remain competitive in the modern business ecosystem, it must tap into the power of AI and ML technologies to provide a superior customer experience.

How Are AI and ML Enhancing Customer Experience?

Artificial intelligence and machine learning solutions can profoundly impact every facet of the customer experience. By leveraging these technologies, your business can:

1. Facilitate Hyper-Personalization

Customers who interact with your brand are looking for a personalized experience. As such, brands that put their products and services at the center of attention instead of prioritizing experience will miss the mark. Likewise, blasting your customers with generic advertising content or sending them broad, basic messages simply won’t cut it anymore. Instead, you must personalize every interaction to deliver timely and relevant content to each user.

Artificial intelligence and machine learning technologies facilitate a level of hyper-personalization that was thought to be unachievable just a few years ago. In a 2022 Salesforce survey, 88% of consumers reported that an experience provided by a company is almost as important as the product. Using AI and ML technologies, you can personalize customer experiences with real-time data such as browsing history and purchasing habits.

Artificial intelligence and machine learning solutions can also eliminate friction from the customer journey. For instance, AI- and ML-powered chatbots can leverage information from past interactions to create personalized messages for each consumer. This will minimize customer frustration by reducing how often consumers are asked to repeat information they have previously provided.

2. Allow Customers to Stay Connected 24/7

Customers expect access to timely and relevant support around the clock. However, staffing your customer support department 24/7 is often financially infeasible. So how do you bridge the gap between customer expectations and the fiscal limitations of your business? AI and ML solutions are the clear answer.

With artificial intelligence and machine learning technologies, you can provide your customers with access to automated support like chatbots. These bots can respond immediately to customers and resolve many basic product- or service-related issues without tying up your customer support staff. This capability will not only allow you to reduce the workload on your team but also help you provide more timely and omnichannel service to customers, no matter when they reach out for assistance.

3. Conduct Predictive Behavior Analyses

The sooner you can identify consumer behavior trends, the better your chances of capitalizing on emerging opportunities. Unfortunately, traditional analytics solutions do not facilitate real-time decision-making because they often rely on data that is days (or even weeks) old.

The good news is that artificial intelligence and machine learning technologies enable you to conduct predictive behavioral analyses using real-time data, guiding your decision-making processes and enabling you to adapt to emerging trends like never before.

4. Enhance Your Understanding of Target Audiences

Artificial intelligence and machine learning technologies allow you to step into your target audience’s mind. You can use these newfound insights to guide your digital marketing strategies, refine products and services, and enhance the customer experience.

Due to how AI and ML learn and evolve, these technologies will only become more effective over time as they get access to more data, better helping you anticipate how your target audiences are likely to behave in the future. This enables you to proactively eliminate friction points from the buyer’s journey and paves the way for increased sales and better profitability.

Read more: Is AI-powered mobile app what you need for your business now?

Use Cases: Major Industries that Have Embraced AI and ML

Artificial intelligence and machine learning technologies are going mainstream, and many industries are taking advantage of these powerful tools for both B2C and B2B interactions. Business leaders in these sectors understand that these technologies will significantly impact their organizations’ ability to compete, both now and in the future.

Some of the industries that are using AI and ML technologies on a broad scale include:

- Software development

- Language processing and transcription

- Retail

- Customer service

- Marketing

- Manufacturing

- Finance

- Agriculture

- Logistics and transportation

- Healthcare

The healthcare and logistics sectors were some of the earliest adopters of artificial intelligence and machine learning technologies, whether by predicting the likelihood of patients developing certain diseases or by providing customers with more accurate shipping estimates. These industries (and every other on this list) utilize AI and ML technologies to enhance the customer experience.

These technologies also provide meaningful insights into the efficiency of business operations. Organizational leaders can use the information gleaned from these technologies to proactively address critical organizational growth hurdles and promote business continuity.

How Your Business Can Optimize Customer Experience with AI and ML

Artificial intelligence and machine learning technologies will empower your business to revolutionize the customer experience along every meaningful touchpoint. First and foremost, these technologies will help your business truly understand the customer journey and its impact on organizational profitability. And once you understand the state of your business and how well it is currently managing the customer experience, you can begin using your AI and ML tools to refine the customer experience.

If you want to maximize your return on investment, consider incorporating artificial intelligence and machine learning technologies into as many business processes as possible. You can use these solutions to automate redundant processes, hyper-personalize advertising content, and refine the customer experience from top to bottom.

Read more: Use cases and business benefits of deploying Machine Learning!

Tap into the Power of AI and ML with Fingent

Are you ready to harness the power of artificial intelligence and machine learning so that you can provide your clients with the experience they deserve? If so, then it is time to explore a partnership with Fingent.

At Fingent, a top custom software development company, we provide customized artificial intelligence applications and machine learning solutions. Together, these technologies will differentiate your brand in the competitive digital marketplace and enable you to modernize the customer experience.

To learn more about Fingent’s suite of services and solutions, contact our team today. Together, we can reshape your customer experience and set the stage for the growth of your business.


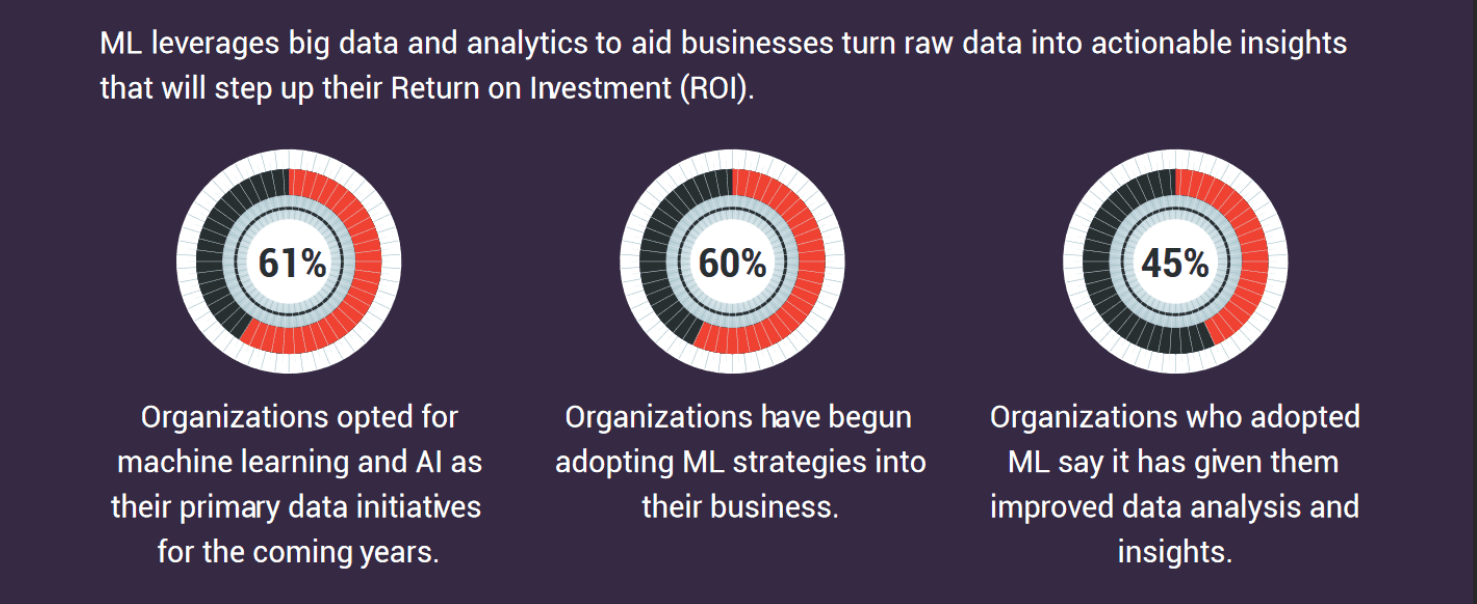

Machine learning is changing the face of everyday life, science, and business. It is revolutionizing industries, from advancing medicine to powering various cutting-edge technologies. Though machine learning (ML) was part of AI’s evolution until the 1970s, it has since evolved independently. It has become a key tool for cloud computing and eCommerce.

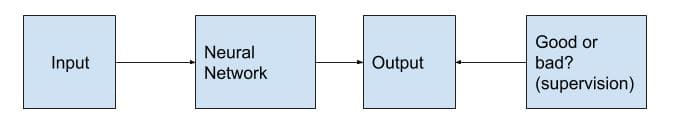

The goal of machine learning in business is to adapt to new data independently and make decisions and recommendations based on thousands of analyses. Machine learning enables systems to learn, identify patterns and make informed decisions with minimal human intervention.

Today, ML is a necessary aspect of modern business. It uses algorithms and neural network models to improve the performance of computer systems. Machine learning in business and manufacturing is enabling organizations to achieve notable strides. These strides include increased performance and efficiencies, improved processes, and enhanced security.

This article will discuss the benefits of machine learning in business and its use cases.

Remarkable Benefits of Machine Learning for Businesses

According to Fortune Business Insights, the global market size for machine learning in business is expected to grow to USD 209.91 billion by 2029, exhibiting a CAGR of 38.8% during the forecast period. ML has helped businesses scale operations tremendously and continues to do so. Across industries, ML has led to a boom in affordable data storage and faster and more reliable computational processing.

Here are six remarkable benefits of machine learning in business:

1. Automation for better decision-making

Many businesses find themselves wasting precious time sorting through duplicate and inaccurate data. Such businesses benefit from using the predictive modeling algorithms of ML in their processes. These models can identify duplicate and inaccurate records and flag anomalies, which helps the organization avoid inaccurate reporting that can result in poor customer retention.

With an accurate database, businesses can detect wasted costs, missed sales opportunities, and lost revenue. In addition, organizations can overcome challenges and risks that arise due to miscommunication or poor performance metrics. Thus, businesses can streamline their operations and improve decision-making, which can translate into better ROI.
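The data-cleaning step described above can be sketched in a few lines. This example is an assumption-laden illustration rather than a trained predictive model: it deduplicates hypothetical customer records by normalized email address and flags outlying order totals with a modified z-score based on the median absolute deviation. The field names, sample values, and threshold are invented for the example.

```python
import statistics
from typing import Dict, List


def deduplicate(records: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Keep the first record seen for each normalized email address."""
    seen = set()
    unique = []
    for record in records:
        key = record["email"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def flag_anomalies(values: List[float], threshold: float = 3.5) -> List[int]:
    """Return indices whose modified z-score exceeds the threshold."""
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        return []  # No spread to measure against, so nothing is flagged.
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - median) / mad > threshold
    ]


# Hypothetical customer records; the first two differ only in formatting.
customers = [
    {"email": "Ana@Example.com", "name": "Ana"},
    {"email": " ana@example.com", "name": "Ana P."},
    {"email": "bo@example.com", "name": "Bo"},
]
unique_customers = deduplicate(customers)

# Hypothetical order totals with one suspicious entry.
order_totals = [102.0, 98.0, 101.0, 99.0, 100.0, 950.0]
suspicious = flag_anomalies(order_totals)
print(len(unique_customers), suspicious)
```

The median-based score is used here because a single extreme value distorts it far less than it would distort a mean and standard deviation.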

2. Increased scalability with minimum expense

Semi-supervised machine learning algorithms can help organizations leverage useful insights from customer profiles and enable them to view their brands from customers’ perspectives. Doing so will equip organizations with relevant insights to build their brand by improving their products and services.

3. Predictive maintenance

ML-powered predictive maintenance helps manufacturing firms follow best practices that lead to efficient and cost-effective operations. Models trained on historical and real-time data predict problems and suggest strategies for solving them. Plus, workflow visualization tools can help eliminate those issues and the unwanted expenses they incur.

4. Financial analysis

ML can greatly assist financial analysis because it can gather and analyze large volumes of quantitative, accurate historical data. It is used for portfolio management, loan underwriting, fraud detection, and more.

5. Personalization

Using machine learning in business will allow organizations to know their customers better and provide them with a more personalized customer experience. Organizations no longer need to rely on guesswork because ML models can process different types of information collected from numerous sources and provide relevant data about their customers.

6. Cybersecurity

ML technologies can strengthen an organization’s defenses against cyberattacks. Empowered by ML, intelligent security programs can gather and process data about cyber threats and respond to them in real time. ML models can detect the slightest deviations in patterns and flag them, or stop an attack in its nascent stage.

Read more: Can Machine Learning Predict And Prevent Fraudsters?

Top Use Cases

Machine learning has made its mark across industries and found a place in many different applications. Here are some top use cases:

1. Enhanced social media features

Businesses can use machine learning algorithms to create attractive and effective social media features. For example, ML algorithms in Facebook enable it to identify and record a person’s activities. These activities include records of chats and the amount of time that person spends on each post. It uses this data to determine what kind of friends and topics may interest that person and accordingly make suggestions.

Read more: Why Time Series Forecasting Is A Crucial Part Of Machine Learning

2. Product recommendation

Product recommendation is an advanced application of machine learning techniques. It is among the most popular features of almost every eCommerce website today. This technique allows websites to track a consumer’s behavior based on their previous purchases, search patterns, and cart history, and then make apt product recommendations to that consumer.
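To make the idea concrete, here is a minimal sketch of one simple recommendation approach: counting which products appear together in past baskets and suggesting the most frequent companions. The product names and baskets are hypothetical, and production recommenders generally rely on far richer signals and trained models.

```python
from collections import Counter, defaultdict
from itertools import permutations
from typing import Dict, List


def build_cooccurrence(baskets: List[List[str]]) -> Dict[str, Counter]:
    """Count how often each ordered pair of products shares a basket."""
    cooccurrence: Dict[str, Counter] = defaultdict(Counter)
    for basket in baskets:
        for first, second in permutations(set(basket), 2):
            cooccurrence[first][second] += 1
    return cooccurrence


def recommend(cooccurrence: Dict[str, Counter], purchased: List[str], top_n: int = 2) -> List[str]:
    """Suggest products that most often accompany the purchased items."""
    scores: Counter = Counter()
    for item in purchased:
        scores.update(cooccurrence.get(item, Counter()))
    for item in purchased:
        scores.pop(item, None)  # Never recommend what was already bought.
    # Highest score first; ties are broken alphabetically for determinism.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [name for name, _ in ranked[:top_n]]


# Hypothetical purchase history.
past_baskets = [
    ["phone", "case", "charger"],
    ["phone", "case"],
    ["phone", "charger", "earbuds"],
    ["laptop", "mouse"],
    ["phone", "case", "earbuds"],
]
pairs = build_cooccurrence(past_baskets)
print(recommend(pairs, ["phone"]))
```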

3. Recognition

Image recognition is one of the most significant and notable ML and AI techniques. It is also used for pattern recognition, face detection, and face recognition.

4. Sentiment analysis

Sentiment analysis is an ML application that can run in real time. It determines the emotion or opinion of the speaker or the writer. For example, a sentiment analyzer can detect the thought and tone of a written review or an email. It can be applied to review-based websites, decision-making applications, and more.
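The simplest form of sentiment scoring can be sketched with a word list. Note that this is a lexicon-based stand-in and not a trained ML model: the `POSITIVE` and `NEGATIVE` word sets are tiny, hypothetical examples, and real sentiment analyzers typically learn from labeled text.

```python
import re

# Tiny illustrative word lists; a real lexicon would be far larger.
POSITIVE = {"great", "love", "excellent", "fast", "helpful"}
NEGATIVE = {"bad", "slow", "broken", "terrible", "rude"}


def sentiment(text: str) -> str:
    """Classify text as positive, negative, or neutral by counting cue words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("Great product, fast delivery!"))
print(sentiment("The support agent was rude and the app is slow."))
```

A word-count approach like this misses negation and sarcasm, which is one reason trained models are usually preferred for production use.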

5. Access control

Most large businesses are actively implementing ML models to determine the level of access an employee should be granted. This application of machine learning can ensure the security of the organization.

6. Bank Domain

Banks are using ML to prevent fraud and protect accounts from hackers. Machine learning algorithms determine what factors to consider in creating a filter to prevent an attack.

Read more: Machine Learning – Deciphering the most Disruptive Innovation

How Fingent Can Help with Deploying the Best of ML

Leveraging the capabilities of machine learning in business can open the door to many opportunities. It is wise for any organization to take advantage of ML rather than lag behind competitors. However, we understand if you have questions. That’s why Fingent’s software development experts are here to help you. We can deploy the best machine-learning models efficiently and smoothly.

As a partner, Fingent can work with your team as you take on digital initiatives for sustainable business growth. We enable our clients to make data-driven decisions by efficiently deploying machine learning in business. Our cost-effective services will save you a considerable amount of time and money.

Furthermore, we do not follow a one-size-fits-all strategy. We provide custom software development services that cater to your needs. Therefore, look no further if you are looking for a reliable, efficient IT partner to deploy the best machine-learning models.


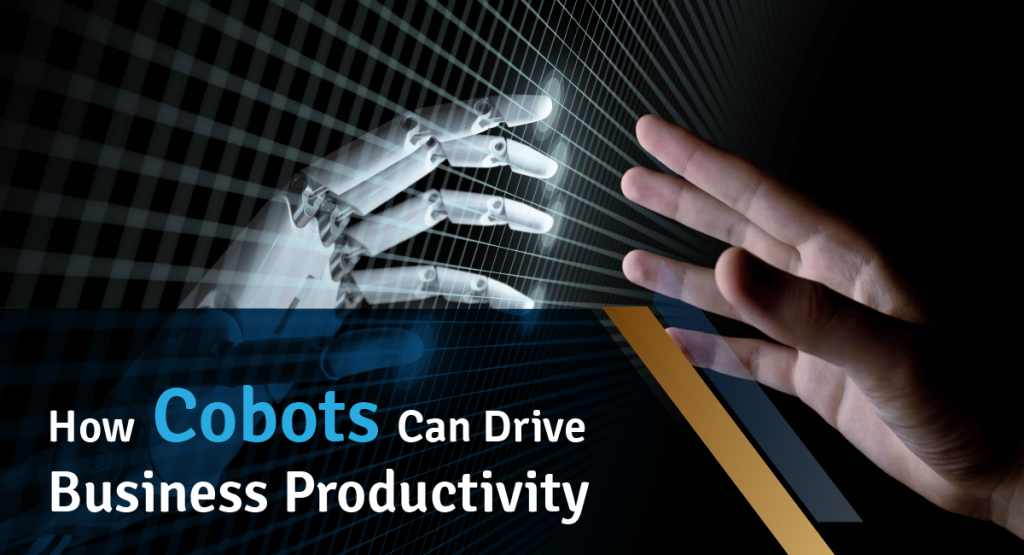

The COVID-19 pandemic has accentuated the need for resilient supply chains and human-machine collaboration at work. Full or partial shutdowns, as well as social distancing regulations, force factories and workspaces to operate with minimal onsite crews. Despite labor shortages, supply chain disruptions, and other production challenges, manufacturers are under constant pressure to respond to evolving market needs. The demands for mass customization, quality, faster product cycles, and product variability are at an all-time high. Tackling these persistent challenges requires combining human skill and ingenuity with the strength and speed of robots. To bring the best of both worlds – human creativity and robotic precision – manufacturers should adopt cobots (collaborative robots) that can reduce human interaction where feasible and accelerate production cycles.

Cobots allow manufacturers to maximize production and address the changing demands while ensuring the safety of their employees, clients, and partners. Why are cobots the future of manufacturing? How do they help build manufacturing resilience? Let’s explore further in this blog.

Read more: What are cobots and how can they benefit industries

Cobots Enhance Manufacturing Efficiency

Collaborative robots or cobots are designed to safely work alongside humans in tedious, dull, and hazardous environments. Unlike the traditional industrial robots that work in fenced premises to avoid close proximity with people, cobots operate in a shared workspace alongside human labor. For instance, a robot that helps humans sort foam chips in a lab is a cobot, while a robot welding a sharp cutting tool in a restricted factory area is a typical industrial robot.

Conventional industrial robots have long enabled manufacturers to leverage automation and compensate for labor shortages, but they are typically designed to execute one specific task. Moreover, they lack the cognitive capabilities possessed by humans to reprogram their operations based on new circumstances. In contrast, cobots don’t require heavy, pre-programmed actuators to drive them. Cobot motions are steered by computer-controlled manipulators, such as robotic arms, which are supervised by humans. Thus, cobots facilitate effective human-machine collaboration at work.

Cobots can be programmed to perform a wide range of tasks in a factory setting, such as handling materials, assembling items, palletizing, packaging and labeling, inspecting product quality, welding, press-fitting, driving screws and nuts, and tending machines. While cobots attend to these mind-numbing jobs, human workers can focus on tasks that require resourcefulness and reasoning.

Read more: Digital Transformation in Manufacturing

Benefits of Cobots in Manufacturing

Modern manufacturing requires effective human-machine collaboration to cut expenses, reduce time-to-market, and address growing customer demands. Here’s how cobots empower manufacturing enterprises.

1. Easy to Deploy and Program

It takes days or weeks to install and program a traditional industrial robot. A cobot, on the other hand, can be set up in less than an hour. Cobots are also lighter than conventional robots. With user-friendly mobile applications and customized software, you can swiftly program the cobot to get started. The right software configurations enable cobots to learn new actions without any specialized training. Using intuitive 3D visualizations or simple graphical representations, you can move the robot arm to preferred waypoints. Your employees can focus on more critical tasks while the cobot takes care of mundane jobs.

2. Flexible to Perform Different Tasks

Cobots can be easily shifted from one workstation to another due to their flexible hardware. With minimal software customizations, cobots can be re-deployed or repurposed to perform different functions across various departments. For example, a cobot that performs picking and packing can be re-programmed as a filler by replacing its robotic arm with a tube and nozzle.

Read more: Challenges, Opportunities, and Technologies That Will Revolutionize Manufacturing

3. Save Production Cost and Time

A study conducted by the World Economic Forum in association with Advanced Robotics for Manufacturing found that collaborative robots can cut nearly two-thirds of the cycle time required to pack boxes onto pallets. Because cobots are designed to work without any breaks, they reduce the idle time between cycles. The International Society of Automation reports that cobots can save production costs by reducing manual labor by 75%. Traditional robots increase installation costs for manufacturers because they need additional safety measures around the deployment area. Cobots don’t incur such extra expenses as they can be set up in close proximity to humans.

4. Improve Employee Engagement and Productivity

Cobots work in collaboration with people to carry out tasks more effectively. They can never replace the human touch in production. When cobots take care of repetitive tasks such as screwing caps onto bottles or packing medical equipment, employees can focus on more important functions such as running quality checks or inspecting a worksite. This allows manufacturers to optimize their productivity and boost employee morale. Businesses can also prepare their workforces to learn new skills.

5. Maintain Consistency and Accuracy

From the first to the hundredth task, cobots maintain the same level of accuracy and consistency. Humans can get drained easily, whereas a cobot never deviates from the actions for which it is set up. This helps ensure high product quality and uniformity. With the right software and hardware configurations, cobots can produce more finished goods at an incredible pace, faster than handcrafting.

Cobots and The Future of Manufacturing

Industry 4.0 paved the way for automation and smart manufacturing powered by data-driven technologies such as IoT, cyber-physical systems, wearables, AR, cloud computing, artificial intelligence, and cognitive computing. Because the sole focus of Industry 4.0 is to improve process efficiency through physical and digital integration, it inadvertently overlooks the significance of human value in process optimization. Industry 5.0 shifts the focus back to human value by fusing the roles of mechanical components and human workers in production. This makes cobots the very foundation of the next wave of the industrial revolution, that is, Industry 5.0.

Denmark-based Universal Robots reports that cobots are at the heart of Industry 5.0. Cobots democratize robotic capabilities, thereby serving as a personal tool that can be leveraged by any member of the workforce to apply creative skills and generate more value. Cobots can be used as a plug-and-play solution across a variety of manufacturing and industrial operations such as automotive production, food processing, chemical plants, and medical devices and kits, among others.

Since they collaborate well with humans in a safe environment, cobots will:

- augment intelligent decision-making,

- drive high-quality products to the market,

- enable mass customization and personalization,

- optimize manufacturing costs,

- generate new job roles (e.g., Chief Robotics Officer), and

- boost virtual education to make the most of collaborative robotics.

Read more: How Custom Software Development Helps Manufacturing Industry

How We Help Manufacturers Leverage Cobots and Other Emerging Technologies

As technology matures, manufacturing enterprises need to build use cases that prove the value of human-robot collaboration. We help develop POCs and use cases that demonstrate how your business can benefit from cobots. Our experts can develop your cobot management software or mobile app from scratch or customize your existing software to address evolving market demands. Fingent, a custom software development company, can work alongside your cobot hardware manufacturers to develop a robust software orchestration layer that can control the movement of your cobots. We also simplify the training process to help you get started in no time.


Better businesses need better cyber security.

Regrettably, threats to cyber security have become the new norm across public and private sectors. The pandemic affected all types of businesses. If anything, uncertainties around remote working amplified cybercrime. As a result, cyber security’s importance is clearer now than ever before.

As cyberattacks become more sophisticated, businesses will have to stay one step ahead. Security professionals need strong support from advanced technologies like Artificial Intelligence (AI) to protect their companies from cyber threats.

AI can enable security teams to handle greater and more complex threats than ever before. More specifically, it has proven able to identify and prioritize threats. In some cases, AI has even taken automated action to quickly remediate security issues. This article considers how AI can redefine the cyber security needs of an organization.

Before we discuss further, let’s find out the impact cyberattacks can have on businesses.

How Cyberattacks Affect a Company’s Performance and Value

Protecting a company against cyber threats is costly, and a successful attack can also damage the relationship between your company and your customers.

Unfortunately, cyber threats are never static. Millions are created each year and are becoming more and more potent.

According to the Hiscox Cyber Readiness Report, 28% of the businesses that suffered attacks were targeted on more than five occasions in 2020. Companies have lost millions to such cyber security breaches. Sectors such as financial services, technology, and energy were hit the hardest.

That is not all. Cyber security breaches have caused several other kinds of damage, including:

- Outlays such as insurance premiums and public relations support.

- Operational disruption.

- Altered business practices.

- Damage to the business’s standing and customer trust.

- Stolen intellectual property including product designs, technologies, and go-to-market strategies.

- Legal consequences.

Read more: Quantum Vs Neuromorphic Computing – What Will the Future of AI Look Like!

How AI Contributes to Cyber Security

Cyber threats are real and certainly worrisome to businesses. It is important to protect critical digital assets.

However, it takes planning and commitment of resources. With good security operations, you can stay on top of most serious cyber threats. True, there may be smart thieves, but Artificial Intelligence can provide smarter security.

Here are 5 specific ways AI can contribute to cyber security:

1. Robust Zero-Day Malware Detection

Malware is unpredictable. And signature-based tools will not detect attacks that have never occurred before. Given that, is it possible to defend against something unpredictable? Yes!

AI is capable of grasping all the possibilities and finding relationships that traditional security tools would miss. While traditional security strategies have their place in cyber security, they are insufficient to detect and prevent zero-day attacks.

Zero-day attacks are best detected by automatically identifying aberrant behavior and alerting administrators immediately. AI can enable organizations to be more proactive and predictive with their security strategies.

Artificial Intelligence provides visibility and security for an organization’s entire data flow. AI helps organizations gain such visibility by dismantling each incoming file to search for any malicious elements. Simultaneously, it also looks at user and network behavior for deviations from expected activity.
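
To make the idea of spotting deviations from expected activity concrete, here is a minimal sketch in Python. It is not a production detector: it assumes a simple z-score test against a baseline, and the function name, threshold, and login counts are all hypothetical values chosen for illustration. Real AI-based tools learn far richer behavior models.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value) pairs in `observed` that deviate from the baseline.

    A value is flagged when it lies more than `threshold` standard
    deviations away from the baseline mean (a simple z-score test).
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # Flat baseline: any value that differs from it is unusual.
        return [(i, v) for i, v in enumerate(observed) if v != mu]
    return [
        (i, v)
        for i, v in enumerate(observed)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical data: logins per hour for a single account.
baseline_logins = [4, 5, 6, 5, 4, 6, 5, 5]
todays_logins = [5, 6, 48, 4]

alerts = find_anomalies(baseline_logins, todays_logins)
print(alerts)  # the hour with 48 logins is flagged
```

In practice the flagged entries would be sent to administrators as alerts, and the baseline would be refreshed as normal behavior changes.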

Together with ML, AI continually adapts its behavior to new network and security conditions. Even hackers who use modern ML penetration methods will find it hard to fool AI-enabled cyber security.

We cannot stop security breaches from happening. But Artificial Intelligence helps organizations avoid potential disruptions before attackers wreak havoc.

2. AI Can Safeguard Large Amounts of Data

Whether a company is small or mid-sized, there is a lot of data exchanged between customers and the company every day. This information must be safeguarded from potential cyber threats. Cyber security experts cannot always inspect all the data for potential threats.

AI is the best option to detect threats to routine activities. Because of its automated nature, AI can sift through large amounts of data in real-time and identify any hazards lurking amid the chaos.

Read more: Artificial Intelligence and Machine Learning – The Cyber Security Heroes Of FinTech!

3. AI Takes Care of Redundant Cyber Security Operations

Hackers constantly modify their methods, but the fundamental security practices do not change. Plus, carrying out the same routine tasks day after day can wear out your cyber security staff.

Artificial Intelligence takes care of redundant cyber security operations while imitating the best of human traits. It also does a thorough analysis of the network to locate security flaws that may harm your network.

4. AI Can Boost Response Time

The ideal security system is one that can detect threats in real time. The principle of ‘a stitch in time saves nine’ applies here.

Integrating AI with cyber security measures helps you detect and respond to attacks as they happen. Unlike a human reviewer, AI does not tire or lose focus when examining your system for risks. Besides, it can detect risks early, thereby boosting response time.

5. Authenticity Protection

Most websites allow users to log in and access services or make purchases. You will need greater protection as such a site contains private information and sensitive material. To maintain customer trust, it is important to ensure all data about your guests remains safe while accessing your site.

Artificial Intelligence can provide an enhanced security layer. AI can secure authentication when a user wishes to connect their account. Login measures like CAPTCHA, fingerprint, and facial recognition are used to determine if the attempt is legitimate or not.

Read more: Safeguarding IT Infrastructure from Cyber Attacks – Best Practices

Do Not Be Afraid!

Fingent is your reliable security partner. We provide professional security with reliable service. As a proactive security partner, we look ahead to ensure your business is successful far into the future.

Using AI’s real-time monitoring capabilities, we can spot potential issues before they become a major problem. The security experts at Fingent are aware that cyber security threats are not limited to work hours. Our professionals will be there for your business whenever you need us.

We are in business today because of the reputation we built with our customers. We offer a unique level of enterprise IT support, and our clients can rest easy knowing that their business is always protected.

Give us a call and let’s discuss your security needs.


Businesses are always on the lookout for ways to optimize processes and gain greater visibility into them. When processes work efficiently, output is higher. This leads to workflows that run smoothly with minimal errors and higher capacity. That is a good reason for the growing popularity of process automation and visualization.

Automation and visualization are the future of the business strategy. Gone are the days of carefully filling in graph paper by hand. Today, process automation and visualization help enterprises up their game by allowing access to real-time models capable of accurately capturing the nuanced data sets.

In this blog, we will expand on how enterprises can up their game with process automation and visualization.

How Enterprises Can Up Their Game with Process Automation and Visualization

Data visualization enables human operators to manage vast sets of data, glean insights from different information sources, and perform operations more intuitively and strategically.

In the current data-immersed world, data visualization can significantly add value by conveying large datasets visually. What does this mean for your business? This means a better grasp of critical customer data.

According to the IDC, the collective sum of the world’s data is predicted to grow to 175 zettabytes by 2025. Processing such large amounts of data can become a problem.

By allowing automation and the right programs to sort out your business data, you can generate graphs. You will be able to use these graphs to up your game in business competition.

Data visualization offers businesses the hope of getting a grasp on data. Fortunately, the human brain can process and recognize trends, identify potential issues, and forecast future development from clear visual displays.

Read more: How Powerful Is Data Visualization With Tableau

Look Out for Upcoming Powerful Trends in Automation and Visualization

1. AI and ML

Artificial Intelligence and Machine Learning render visualization more accurate and efficient. These technologies enable businesses to handle customer feedback without bias. Process automation allows you to sort the feedback in real-time and according to your specifications.

2. Unlock Big Data with Data Democratization

Large amounts of data are hard to understand. It requires data scientists and other experts to unlock its treasures. Not anymore. Advanced no-code data analysis platforms can automate your data process. This is called the democratization of data.

Democratization of data leaves it malleable and easy to display, so employees at any level of technical skill can work with it. When this is paired with the right type of data visualization, it can unlock big data results for teams at all levels of your organization.

3. Video Visualization Is Here to Stay

Young and old alike tend to retain the information they see over what they hear. This would mean that video infographics will be the future.

Video applications for business strategy and customer retention are key areas for future strategic data visualization implementation.

4. Real-time Visualization for Early Detection

Knowing a problem at the exact moment it arises can assist businesses in customer retention and brand presence. Early detection can have a dramatic impact on the bottom line.

Process automation can help run a dashboard that allows users to submit their error reports to your customer support. Then the reviews can be tagged and analyzed using sentiment analysis.
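
As a rough illustration of tagging incoming reports by sentiment, the sketch below uses a tiny word-list approach. The word lists, function name, and sample reports are hypothetical; a real deployment would more likely rely on a trained sentiment model or a much larger lexicon.

```python
import re

# Hypothetical mini-lexicons, kept tiny for illustration only.
POSITIVE = {"great", "love", "fast", "helpful", "easy"}
NEGATIVE = {"broken", "slow", "crash", "error", "refund"}


def tag_sentiment(text):
    """Tag a report as positive, negative, or neutral by counting lexicon hits."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


reports = [
    "The app is slow and the export is broken",
    "Love the new dashboard, very easy to use",
    "How do I change my password?",
]

# Pair each report with its tag so a dashboard could group or count them.
tagged = [(tag_sentiment(report), report) for report in reports]
for tag, report in tagged:
    print(f"{tag}: {report}")
```

Counting the tags over time gives a simple series that a real-time dashboard could plot to surface a spike in negative reports early.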

5. Mobile Optimized Visualization

An increased number of people access the internet on their mobile devices. Your business needs mobile-optimized data visualization to stop customer churn.

It enables you to know if your potential customers are learning about your services through social media or an online review board. Though mobile-optimized visualization is an easy step, it is critical to keep your business on top of the game.

Read more: 7 Awesome Data Visualization Tools

Business Applications of Process Automation and Data Visualization

1. Financial Services and Insurance

The financial services industry is a prime candidate for process automation and data visualization. Two top requirements of this industry are customer response time and compliance with strict regulations.

When processes such as loan applications and claims handling are automated, quick decisions can be made based on pre-defined rules. Businesses can use data visualization to make reliable predictions or risk calculations in the financial industry.

Insurance fraud can cause billions of dollars in damage. Process automation and data visualization can improve fraud detection.

Read more: Deploying RPA for Finance, Healthcare, and IT Operations.

2. Distribution and Logistics

Process automation and data visualization can minimize costs by planning transport promptly, reducing costs of downtimes and maintenance.

3. Sales

Data visualization can greatly improve relationships with your customers. It helps you know the needs of your customers better, and address each of them directly in real-time.

4. Marketing

Data visualization and process automation can reduce marketing costs substantially. These technologies can help evaluate the demographics, location, transactions, and interests of your customers. Visualizing these details can help you understand their purchase patterns.

Thus, data visualization can be used to create and target new customer segments. Cross-selling is another advantage. At the same time, data visualization may reveal that customers are dissatisfied. Identifying this and responding quickly can counteract the situation to retain your customer base.

5. Healthcare

Process automation and data visualization enable cheaper healthcare. They can help predict disease occurrence and proactively propose countermeasures.

6. Science and Research

Visualization enables the evaluation of the data of an experiment. Process automation and visualization can be advantageous especially when an experiment generates large amounts of data within seconds.

7. Production

Large amounts of data are generated during production. Using process automation and visualization can help plan preventive maintenance and prevent production delays or downtimes.

Prepare Your Business For The Future With Fingent

Fingent helps enterprises automate document-based processes. We can help you create safer sharing and collaboration. Our platform allows you to create teams, assign roles and privileges, and streamline communication.

Fingent’s partner integrations allow you to use it together with your existing software. Our top-level measures protect our users’ data. The encryption we provide ensures content integrity and prevents alteration.

Fingent, a top custom software development company, can help your organization reach the goal of paperwork elimination. Doing so can lead to efficient resource distribution throughout the organization.

What’s more, it reduces carbon footprint. Our experts bring along specializations supported by scientific rigor and in-depth knowledge of advanced techniques to design, develop, and deploy solutions for process automation and visualization.

Give us a call today and let’s get talking.


“The human language, as precise as it is with its thousands of words, can still be so wonderfully vague.” – Garth Stein, American author.

Human language is unique and complex. Encoding human language is considered a difficult task. For starters, you can arrange words in infinite ways to form a sentence. Also, each word can have several meanings. Training machines to communicate as humans do is a gigantic task. In recent years, Natural Language Processing (NLP) enabled computers to understand human language and respond sensibly. It is the intersection of computer science, linguistics, and machine learning.

NLP is the driving force behind technologies, like virtual assistants, speech recognition, machine translation, and much more. This blog discusses the importance and top benefits of NLP. It will also explain how businesses can foster the power of NLP.

Importance of NLP

Machine translation is one of the most widely used NLP applications. NLP methods enable machines to understand the meaning of sentences, thus improving the effectiveness of machine translation.

NLP is important for businesses as it can detect and process massive volumes of data across the digital world, including social media platforms, online reviews, and others. Also, NLP technology is able to offer businesses valuable insights into brand performance. It can also detect any persisting issues and take necessary risk mitigation measures to improve performance.

NLP can achieve all of this by training machines to understand human language faster, more accurately, and in a reliable way. Because NLP can monitor and process data consistently, brands can remain updated with their online presence.

NLP is a valuable asset for sentiment analysis as it helps businesses recognize the sentiment of their customers from different online posts and comments.

Top Benefits of NLP and How Businesses Can Foster the Power Of NLP

1. 24/7 Go-To Customer Support

AI-based chatbots use NLP to help customers get immediate answers to any query 24/7. What if the queries focus on the same themes? Chatbots can play the role of a customer service executive by using predefined answers. They can even provide specific support like booking a service or finding a product. Thanks to NLP techniques that analyze specific words, chatbots can now detect a user’s intention.
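
The idea of detecting a user's intention from specific words can be sketched as simple keyword matching. This is only an illustration under stated assumptions: the intent names, trigger words, and function name are hypothetical, and production chatbots typically use trained intent classifiers rather than fixed word lists.

```python
import re

# Hypothetical intents and trigger words, for illustration only.
INTENT_KEYWORDS = {
    "book_service": {"book", "appointment", "schedule", "reserve"},
    "find_product": {"find", "search", "looking", "buy"},
    "order_status": {"order", "tracking", "delivery", "shipped"},
}


def detect_intent(message):
    """Return the intent whose trigger words overlap most with the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    best_intent, best_hits = "unknown", 0
    for intent, keywords in INTENT_KEYWORDS.items():
        hits = len(words & keywords)
        if hits > best_hits:
            best_intent, best_hits = intent, hits
    return best_intent


print(detect_intent("I want to book an appointment for Friday"))
print(detect_intent("Where is my order? Has it shipped?"))
print(detect_intent("Hello there"))
```

Once an intent is detected, the chatbot can reply with a predefined answer for that intent or hand the conversation to a human agent when the result is "unknown".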

We live in an era where people expect an immediate answer to their questions. If they do not get one, they switch to a competitor. Chatbots are the perfect solution! They can even convert prospects into customers by providing ultra-attentive service.

Read more: 5 Leading NLP Platforms that Support Chatbot Development

2. Save Resources

A business is successful when it begins making a profit. It was predicted that NLP-powered chatbots would save businesses over $8 billion per year. They can free up employee time, allowing staff to focus on the creative tasks that engage customers and boost your bottom line. What’s more, NLP-based software can be improved automatically, often without additional investment.

3. Moderate Content on Websites

Businesses can use NLP technology to enforce spam filters and block unwanted content on websites and in email. It enables you to maintain your forum’s quality while avoiding unsolicited advertising, controversies, or backlinks to malicious sites.

Furthermore, the NLP model can even detect hate speech. How is that possible? Advanced NLP models can learn to read the context of a sentence and classify it into the appropriate group. By using the appropriate token, the NLP model can read a user’s emotions. This unique technology allows you to tailor the solution to a specific audience or place, as the language may differ from place to place.

4. Boosts Conversion Rate

Converting site visitors to customers is the key for businesses and NLP solutions are experts in conversion optimization. Along with the chatbots, features like auto-complete text and more advanced search functionality aid in conversion optimization.

5. Enhances End-User Experience

As you may have already noticed from the above points, NLP technology improves the end-user experience. The growth of your business depends on your users having positive experiences throughout their customer journey. And all these factors have an impact on whether they will talk to others about your products or services.

6. Empower Your Employees

NLP solutions are AI-based. So, NLP software can handle the most time-consuming, routine tasks like answering repetitive questions, scanning documents for keywords and filtering them, spell checks, and many more. When these tasks are automated, you will see employee satisfaction and job engagement improve.

7. Search Engine Optimization

Every business wants to rank high. NLP solutions can analyze search queries to identify and suggest related keywords. Doing so saves time spent on research, and you will be able to better target customers and create more focused campaigns. As a result, your company will become more visible.

How NLP Is Transforming The Future

NLP capabilities are advancing faster than ever before. Experiments are being conducted to help bots remember details associated with an individual discussion, and this capability is likely to reach a workable level shortly. Though NLP technology is far from perfect, it is definitely getting harder to tell whether we are talking to a human or a machine.

We are living in an era where machines can communicate with humans. Hence, the possibilities for using NLP are endless, and businesses are expected to reap staggering benefits. The NLP market size is anticipated to reach $35.1 billion by 2026, at a CAGR of 20.3% during the forecast period. As seen above, NLP improves the quality of life by a significant margin. As the world increasingly revolves around automation, we can expect NLP to affect more and more aspects of our lives.

Read more: Using Technology to Build Customer Trust: Your Business Plan for 2022!

How Can Fingent Help Deploy NLP?

As businesses increasingly talk to their customers in their own language, the demand for NLP modules is steeply rising. In a world filled with time-pressed shoppers, businesses are expected to get things right the first time. NLP can get you there.

Fingent, a top custom software development company, helps businesses master e-commerce personalization with content, discovery, and engagement. We can help businesses with their use of chatbots or automation for sales, customer support, brand engagement, and human resources. Fingent can also provide a host of apps and analytics dashboards for the holistic management of multilingual customer interactions. As you can see, the possibilities are endless.

Let’s talk and identify which powerful features of NLP your business will benefit most from!


Supercharge Your Business with These 11 Hot Tech Trends

Technology is having an ever-greater impact on our personal lives and most importantly on the way we do business. The business world has transformed rapidly in the past few years and it will evidently continue to change the pace of business in the years to come. Whether it is the production of goods or the computing devices used at the office, new technologies have helped businesses run smoother and more effectively. As information travels more quickly and reliably, businesses are realizing how easy it is to grow globally and across multiple sectors. To gain benefits, however, businesses must keep up with technology and adopt new trends. This article discusses 11 tech trends to look forward to in the next couple of years. First, let us understand what drives these tech trends, and then we will consider their impact on businesses.

What Will Drive the Evolution of Tech Trends?

Two major drivers behind the evolution of all kinds of tech trends are our day-to-day business challenges and the passion for innovation. As intelligent beings, it is natural for humans to innovate in order to live better. From a business standpoint, however, it is about optimizing all that humans are capable of accomplishing, and that includes making profits.

1. Greater predictive levels and programmability will reshape cybersecurity

Cybersecurity will be one of the prominent IT functions to mature. New technologies will bring about a fundamental shift in cybersecurity as they enable greater predictive capabilities and programmability. It will become more predictive with the use of large-scale data along with AI, analytics, and machine learning. As a general rule, the more we have, the more difficult it becomes to protect it from thieves. That is not the case with immense data accumulation. Even as data increases it will become easier to determine and match patterns to both predict and shut down any attack vectors.

As every element of infrastructure becomes programmable, you can put a firewall inside the software of all virtual machines in your architecture, thus limiting the flow of data within the software. This will eliminate the need to store data on a network from where it can easily be hacked or stolen. Businesses will continue to see this capability emerge as programmability improves.

Read more: Safeguarding IT Infrastructure From Cyber Attacks – Best Practices

2. Promise of serverless computing

According to IDC’s prediction, “from 2018 to 2023 — with new tools/platforms, more developers, agile methods, and lots of code reuse — 500 million new logical apps will be created, equal to the number built over the past 40 years.” Currently, we are witnessing a distinct change in the application infrastructure with most businesses moving to cloud-native applications.

Four distinct computing models are evolving simultaneously: virtual servers, physical servers, container-based computing, and serverless computing. Virtual servers and container-based computing make it easy to move applications. Serverless computing, in turn, promises greater agility and cost savings because applications do not need to be deployed on a server. Instead, functions run on a cloud provider’s platform, return their outputs, and instantly release the associated resources. As businesses see a change in the infrastructure, they will have to make choices about how they approach development.
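
To give a feel for what a serverless function looks like, here is a minimal sketch in Python. It assumes the `handler(event, context)` shape used by function-as-a-service platforms such as AWS Lambda; the event fields (`items`, `price`, `quantity`) and the response layout are hypothetical and chosen only for illustration.

```python
import json


def handler(event, context=None):
    """Compute an order total from the request payload and return it.

    The platform calls this function once per request; no server process
    is managed by the application team, and nothing is kept between calls.
    """
    items = event.get("items", [])
    total = sum(item["price"] * item["quantity"] for item in items)
    return {
        "statusCode": 200,
        "body": json.dumps({"total": round(total, 2)}),
    }


# Local invocation for illustration; in production the cloud platform
# supplies the event and releases the resources after the call returns.
response = handler(
    {"items": [{"price": 9.5, "quantity": 2}, {"price": 1.0, "quantity": 3}]}
)
print(response["body"])
```

The function holds no state of its own, which is what lets the provider start it on demand and free its resources as soon as the output is returned.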

Read more: 5 Trends That Will Transform Cloud Computing in 2020

Accelerated in part by the long-term shutdown due to COVID-19, industries that design and manufacture products will quickly adopt cloud-based technology trends to aggregate and intelligently transform. In a couple of years, these intelligent algorithms will allow manufacturing assembly lines to optimize towards increased levels of output and enhanced product quality thereby reducing the overall waste in manufacturing by half.

3. IoT and Edge

IoT and Edge can rightly be termed as superpowers of the tech world. The developing, managing, and running of widespread IoT and Edge applications will grow in complexity with numerous endpoints. For example, audiovisual technologies are being used to achieve the same input as you would get when you connect numerous IoT sensors. When compared to individual sensors, these tech trends provide reasonable mass coverage at a minimum cost. This is only possible when AI and ML are integrated into the IoT platform. Powering and maintaining thousands of sensors is a daunting prospect. Audiovisual solutions can thus make this a significant growth area. As a result, these changes will have a profound impact on how the businesses get value out of new data that they are able to collect and process.

Read more: Gearing up for IoT in 2020

4. Everything will revolve around data

Enormous amounts of data collected from IoT devices and digital platforms can now be made available through application programming interfaces for business insights, analysis and to develop other applications. Collecting information from a sufficient amount of data-points enables you to model behavior and understand patterns and come to more accurate conclusions quickly with minimum cost. With abundant data from multiple touchpoints and new analytic tools, businesses are able to customize products and services by creating ever-finer consumer microsegments. Businesses that do not build around data will find themselves swamped by its enormity.

5. Voice control is the next evolution of human-machine interaction

The advent of voice technology such as Apple’s Siri, Google’s Assistant, and Amazon’s Alexa is disrupting businesses as it creates a three-way interaction between devices, services, and people. It has completely changed the way consumers interact with smart devices.

According to recent research, by 2024, the global voice-based smart speaker market could be worth $30 billion. This technology trend has a huge impact on how online searches will happen. Businesses will have to adapt their way of promoting their products and services. It will also affect the way companies are organized as internal knowledge can be shared more easily which improves the possibility of multitasking. This will result in increased productivity.

In the next couple of years, we will see a transformation of voice technology from being an information tool to a transaction tool. It offers the possibility to directly order from brands and perhaps even pay.

Read more: 6 Key Predictions for AI-Driven Voice Computing in 2020

6. Blockchain platform market

Blockchain started as an offshoot of the cryptocurrency movement. Now, it is evolving to find use cases beyond just the international settlement areas. According to Gartner, the business value generated by Blockchain will grow to slightly more than $176 billion by 2025 and then exceed $3.1 trillion by 2030. The marriage of Blockchain and Artificial Intelligence can significantly change the nature of transactional businesses. This is possible because Blockchain is a decentralized, unchangeable space for encrypted data, and AI will assist you in analyzing and interpreting that data quickly and reliably to drive actionable insights. There is great potential for this technology to have an immense impact on cybersecurity.

Read more: How Blockchain Enables the Insurance Industry to Tackle Data Challenges

7. Seamless blend of the digital twin

Applied technology will increasingly operate at the intersection of the physical and digital worlds: the digital twin. A digital twin is a digital copy of its physical counterpart, and applied technology will allow you to blend these two worlds seamlessly. The resulting immersive environments will have a pervasive impact on the industry. This twin will allow you to collaborate virtually, simulate conditions quickly, understand what-if scenarios clearly, predict results more accurately, and more. Most businesses are already aware of the benefits of applying the digital world to enhance the physical world. They are digitizing physical processes to reduce inconsistencies, redundancies, and human error.

Explore: Learn more about Digital Twin Technology

This pandemic has shown us that communication is not just for work but is required to form real emotional connections. In the next couple of years, AI technology will be used to connect people at a human level and drive them closer to each other. There has been a lot of concern over the security of video conferencing companies. However, these concerns will move companies to ensure that they provide secure digital connectivity for their consumers.

As secure video conferencing software, InfinCE has been a game-changer for enterprises of all sizes.

8. 5G will be the game-changer

5G has innumerable use cases, ranging from healthcare to more reliable security. With 5G, the audio-video experience will be faster and clearer than it has ever been. In addition, 5G will enable businesses to offer their employees remote opportunities with a work experience similar to that inside the office. It will bolster recruitment and retention efforts for top talent.

As more businesses move their critical tasks to the cloud, employees will become increasingly productive from wherever they work and with whatever device they use. Though 5G coverage is currently limited, according to Ericsson’s Mobility Report, 5G subscriptions could cover up to 65% of the world’s population by 2025. Businesses that anticipate and embrace these emerging technology trends will see a positive impact in the years to come. Low-latency 5G networks can help resolve the challenges caused by the absence of reliable networks and can facilitate more high-capacity services. Private 5G networks can offset the high cost of mobility with economy-boosting activities.

Read more: From Remote Work to Virtual Work, 5G is Reinventing the Way We Work

9. Data lakes enable new analytic models

Data lakes are storage repositories that contain quantitative and qualitative data. Data lakes enable new models of predictive analytics and help unlock the potential of digital twins. Since they can hold enormous amounts of data, organizations can leverage the insights, including discrete data points to create a ‘digital twin’ of each customer. You can gain access to customer details such as demographic data, browsing behavior, purchasing patterns, and payment preferences. The ability to gauge qualitative data will increase the demand for robust ERP systems and AI-driven automation. This would mean that businesses should acquire the skills to set up, manage, and secure their data lakes and build data models that will help extract the insights they require for ongoing innovation.

Read more: 7 Key Differences Between Data Lake and Data Warehouse

10. Sophisticated sentiment analysis for real-time insights

Sentiment analysis uses techniques to interpret and classify the ‘mood’ of your customers. Sophisticated sentiment analysis tools allow businesses to recognize customers’ sentiment towards a product, a service, or a brand. Businesses can also use them to respond to feedback proactively. They allow businesses to understand how people are feeling in real time and proactively position products, services, and visual merchandise. In the future, this technology will be used in addition to tools such as conversation intelligence, text analysis, and natural language processing. It can enable innovation on demand. Businesses will find it advantageous to incorporate sentiment analysis into their data analysis in the areas of customer feedback, marketing, CRM, and e-commerce.

Read more: CTOs Guide – How Robotics and AI Can Improve Customer Experience

11. Micro-fulfillment for e-commerce fulfillment

Robotics has transformed numerous industries, with a few exceptions such as grocery retail. With the new robotic application termed micro-fulfillment, grocery retailing will no longer remain the same. Micro-fulfillment allows you to convert personal garages into storage spaces and can operate 5-10% more economically than a brick-and-mortar store. This rising trend takes the form of tiny urban warehouses that leverage high-end automated systems to complete online orders with greater efficiency. These centers are used to deliver goods rapidly, in as little as an hour. Robotic arms can be used to pick up items. The application of robotics downstream at a ‘hyper-local’ level will disrupt the grocery retail industry. This technology trend will unlock wider access to food and a better customer proposition in terms of product availability, speed, and cost.

Read more: 5 Transformative Trends Ushered by B2B E-commerce in Healthcare and Life Sciences

How Will Technology Continue to Disrupt Businesses?

The transformative potential of innovative technology trends is exciting businesses today. These trends will change the way businesses plan, start, manage, operate, market, and make a profit. The next couple of years will see profound improvements in addressing most business challenges as organizations develop and deploy solutions that deliver tangible results. Driverless cars, 3D printing, artificial and business intelligence tools, robotics, and IoT are just a few examples of how technology has transformed or disrupted the business world and has the potential to keep doing so.

The COVID-19 pandemic has necessitated worldwide collaboration, transparency of data, and speed at the highest levels to navigate the human and business impacts. Now is the time to recognize and support the opportunities for technology trends that can best and most rapidly address business challenges. Partner with us to capitalize on these trends and scale your business quickly.

How Time Series Analysis Enables Businesses to Improve Their Decision Making

- Introduction

- Definition of Time Series

- The 5 Most Effective Time Series Methods for Business Development

- Time Series Regression

- Time Series Analysis in Python

- Time Series in Relation to R

- Time Series Data Analysis

- Deep Learning for Time Series

- Benefits of Using Deep Learning to Analyze Your Time Series

- Time Series is Valuable for Business Development

Introduction

Time series data is one of the most common types of data encountered in daily life. Many companies use time series forecasting to help them develop business strategies. These methods are used to monitor, explain, and predict certain ‘cause and effect’ behaviours.

In a nutshell, time series analysis helps to understand how the past influences the future. Today, Artificial Intelligence (AI) and Big Data have redefined business forecasting methods. This article walks you through 5 specific time series methods.

Definition of Time Series

A time series is a sequence of data points that record a given phenomenon at specific intervals as it changes over time. The data is indexed by time.

The four variations to time series are (1) Seasonal variations (2) Trend variations (3) Cyclical variations, and (4) Random variations.

Time Series Analysis is used to determine a good model that can be used to forecast business metrics such as stock market price, sales, turnover, and more. It allows management to understand timely patterns in data and analyze trends in business metrics. By tracking past data, the forecaster hopes to get a better than average view of the future. Time Series Analysis is a popular business forecasting method because it is inexpensive.

Read more: Why Time Series Forecasting Is A Crucial Part Of Machine Learning

The 5 Most Effective Time Series Methods for Business Development

1. Time Series Regression

Time series regression is a statistical method for predicting a future response from the history of previous responses, a relationship known as autoregressive dynamics. It helps analysts understand and predict the behaviour of dynamic systems from observed or experimental data. Time series regression is often used for modeling and forecasting biological, financial, and economic systems.
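As a minimal sketch of the autoregressive idea, the example below fits a first-order model, y[t] = intercept + slope * y[t-1], by ordinary least squares and uses it to predict the next value. The function names and the sales figures are hypothetical, and a real project would normally rely on a statistics library rather than hand-written formulas.

```python
def fit_ar1(series):
    """Least-squares fit of y[t] = intercept + slope * y[t-1]."""
    previous = series[:-1]   # responses at time t-1
    current = series[1:]     # responses at time t
    n = len(previous)
    mean_prev = sum(previous) / n
    mean_curr = sum(current) / n
    sxx = sum((p - mean_prev) ** 2 for p in previous)
    sxy = sum((p - mean_prev) * (c - mean_curr)
              for p, c in zip(previous, current))
    slope = sxy / sxx
    intercept = mean_curr - slope * mean_prev
    return intercept, slope


def forecast_next(series, intercept, slope):
    """One-step-ahead prediction from the last observed value."""
    return intercept + slope * series[-1]


# Hypothetical data generated exactly by y[t] = 10 + 0.5 * y[t-1]
sales = [100.0]
for _ in range(9):
    sales.append(10 + 0.5 * sales[-1])

intercept, slope = fit_ar1(sales)
next_value = forecast_next(sales, intercept, slope)
print(intercept, slope, next_value)
```

Because the sample data follows the model exactly, the fit recovers the generating coefficients. Real data would leave residual error that needs to be examined.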

Regression analysis serves three goals: prediction, modeling, and characterization. The order in which these goals are pursued depends on the prime objective. Sometimes modeling is done to get a better prediction, and other times it is done just to understand and explain what is going on. Most often, prediction and modeling proceed iteratively. When the goal is better control, analysts may build a model in order to obtain predictions, but iteration and other special approaches can also be used to solve control problems in businesses.

The process could be divided into three parts: planning, development, and maintenance.

Planning:

- Define the problem, select a response, and then suggest variables.

- Ordinary regression analysis is conditioned on errors present in the independent data set.

- Check if the problem is solvable.

- Compute basic statistics and the correlation matrix, and perform the first regression runs.

- Establish a goal, prepare a budget, and make a schedule.

- Confirm the goals and the budget with the company.

Development:

- Collect the data and check its quality. Plot the data, then try candidate models and regression conditions.

- Consult experts.

- Find the best models.

Maintenance:

- Check if the parameters are stable.

- Check if the coefficients are reasonable, if any variables are missing, and if the equation is usable for prediction.

- Check the model periodically using statistical techniques.

2. Time Series Analysis in Python

Python offers a number of representations of times, dates, deltas, and timespans. It is helpful to see how pandas relates to other packages in Python. The pandas software library (written for Python) was developed largely for the financial sector, so it includes very specific tools for working with financial data.

Read more: How Predictive Algorithms and AI Will Rule Financial Services

Understanding Date and Time Data:

- Time Stamps: Refers to particular moments in time.

- Time intervals and periods: Refers to a length of time between a particular beginning and its endpoint.

- Time deltas or durations: Refers to an exact length of time.

Native Python dates and times:

Python’s basic objects for working with dates and times are in the built-in datetime module. Data scientists can use this module along with the third-party dateutil module to quickly perform a host of useful operations on dates and times. For example, you can use the dateutil module to parse dates from a variety of string formats.
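A short sketch of the native objects, using only the standard library so that it runs without extra installs. The variable names are illustrative. Note that `strptime` needs an explicit format string for each input, which is the manual step a flexible third-party parser is meant to remove.

```python
from datetime import datetime, timedelta

# Build a timestamp by hand
launch = datetime(year=2015, month=7, day=4)

# Parse the same date from two differently formatted strings
parsed_iso = datetime.strptime("2015-07-04", "%Y-%m-%d")
parsed_text = datetime.strptime("4 July 2015", "%d %B %Y")

# Format the weekday name and shift the date by a fixed duration
weekday = launch.strftime("%A")
one_week_later = launch + timedelta(weeks=1)

print(parsed_iso == parsed_text, weekday, one_week_later.date())
```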

Best of Both Worlds: Dates and Times

Pandas provides a Timestamp object that combines the ease of use of datetime and dateutil with efficient storage and a vectorized interface. From these objects, pandas can construct a DatetimeIndex that can be used to index data in a DataFrame.

Fundamental Pandas Data Structures to Work with Time Series Data:

The most fundamental of these objects are the Timestamp and DatetimeIndex objects.

- Time Stamps type: It is based on the more efficient numpy.datetime64 datatype.

- Time Periods type: It encodes a fixed-frequency interval based on numpy.datetime64.

- Time deltas type: It is based on numpy.timedelta64 with TimedeltaIndex as the associated index structure.
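The three types listed above can be tried out in a few lines. This is a minimal sketch that assumes pandas is installed. The `sales` series and its values are made up for illustration.

```python
import pandas as pd

# Time stamp: a single moment in time
ts = pd.Timestamp("2015-07-04")

# DatetimeIndex: time stamps used as the index of a Series
index = pd.DatetimeIndex(["2015-07-04", "2015-07-05", "2015-07-06"])
sales = pd.Series([10, 20, 30], index=index)

# Time period: a fixed-frequency interval (here, the month of July 2015)
period = pd.Period("2015-07", freq="M")

# Time delta: an exact length of time
delta = pd.Timedelta(days=2)
shifted = ts + delta

# Partial-string indexing selects every observation in July 2015
july_sales = sales.loc["2015-07"]

print(shifted, period.start_time, len(july_sales))
```

Indexing by time is what makes later steps such as resampling or selecting a date range concise.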

3. Time Series in Relation To R

R is a popular programming language and free software environment used by statisticians and data miners to develop data analysis. It is made up of a collection of libraries specifically designed for data science.

R offers one of the richest ecosystems for data analysis. With more than 12,000 packages in its open-source repository, it is easy to find a library for almost any required analysis. Business managers will find that this rich library makes R a strong choice for statistical analysis, particularly for specialized analytical work.

R provides fantastic features to communicate the findings with presentation or documentation tools that make it much easier to explain analysis to the team. It provides qualities and formal equations for time series models such as random walk, white noise, autoregression, and simple moving average. There are a variety of R functions for time series data that include simulating, modeling, and forecasting time series trends.
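The models named above are language-independent. The sketch below simulates them in Python purely for consistency with the other examples here, and it does not show R's own functions. It assumes that "simple moving average" refers to a first-order moving average model, and the coefficients `phi` and `theta` are arbitrary illustrative values.

```python
import random


def simulate(n, phi=0.6, theta=0.4, seed=0):
    """Simulate white noise, a random walk, an AR(1) and an MA(1) series."""
    rng = random.Random(seed)
    noise = [rng.gauss(0.0, 1.0) for _ in range(n)]   # white noise
    walk, ar, ma = [noise[0]], [noise[0]], [noise[0]]
    for t in range(1, n):
        walk.append(walk[-1] + noise[t])              # random walk
        ar.append(phi * ar[-1] + noise[t])            # autoregression, AR(1)
        ma.append(noise[t] + theta * noise[t - 1])    # moving average, MA(1)
    return noise, walk, ar, ma


noise, walk, ar, ma = simulate(200)
print(len(noise), len(walk), len(ar), len(ma))
```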

Since R is developed by academicians and scientists, it is designed to answer statistical problems and is well equipped to perform time series analysis. This makes it a strong tool for business forecasting.

4. Time Series Data Analysis

Time series data is collected by observing the same subject at different points in time. This is in contrast to cross-sectional data, which observes subjects such as companies at a single point in time. Since data points are gathered at adjacent time periods, observations in a time series can be correlated with one another.
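One common way to quantify the correlation between observations is the sample autocorrelation at a given lag. A minimal sketch follows, with a hypothetical function name and two made-up series: one that rises steadily and one that flips sign at every step.

```python
def autocorrelation(series, lag):
    """Sample autocorrelation of a series with itself shifted by `lag` steps."""
    n = len(series)
    mean = sum(series) / n
    variance = sum((x - mean) ** 2 for x in series)
    covariance = sum(
        (series[t] - mean) * (series[t - lag] - mean) for t in range(lag, n)
    )
    return covariance / variance


trending = [float(t) for t in range(1, 21)]   # steadily rising values
alternating = [1.0, -1.0] * 10                # sign flips every step

r_trending = autocorrelation(trending, lag=1)
r_alternating = autocorrelation(alternating, lag=1)
print(r_trending, r_alternating)
```

A value near 1 means adjacent observations move together, and a value near -1 means they move in opposite directions.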

Time series data can be found in:

- Economics: GDP, CPI, unemployment rates, and more.

- Social sciences: Population, birth rates, migration data, and political indicators.

- Epidemiology: Mosquito population, disease rates, and mortality rates.

- Medicine: Weight tracking, cholesterol measurements, heart rate monitoring, and BP tracking.

- Physical sciences: Monthly sunspot observations, global temperatures, pollution levels.

Seasonality

Seasonality is one of the main characteristics of time series data. It occurs when the time series exhibits regular, predictable patterns at time intervals that are smaller than a year. A good example of time series data with seasonality is retail sales, which increase from September to December and decrease in January and February.
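A simple way to expose such a pattern is a seasonal index: the average for each position in the cycle divided by the overall average. The sketch below uses hypothetical quarterly sales with a fourth-quarter peak. It is a rough ratio-to-mean calculation that does not remove trend first, so treat it as an illustration and not a full decomposition.

```python
def seasonal_indices(values, period):
    """Average of each position in the cycle divided by the overall average."""
    overall = sum(values) / len(values)
    indices = []
    for position in range(period):
        same_position = values[position::period]
        indices.append((sum(same_position) / len(same_position)) / overall)
    return indices


# Hypothetical quarterly retail sales for two years (Q1 to Q4, twice)
sales = [80, 90, 100, 130, 88, 99, 110, 143]

indices = seasonal_indices(sales, period=4)
peak_quarter = indices.index(max(indices)) + 1
print(indices, peak_quarter)
```

An index above 1 marks a quarter that runs above the average level, and an index below 1 marks a weaker quarter.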

Structural breaks

Time series data often shows a sudden change in behaviour at a certain point in time. Such sudden changes are referred to as structural breaks. They can cause instability in the parameters of a model, which in turn can diminish the reliability and validity of that model. Time series plots can help identify structural breaks in data.
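Besides plotting, a candidate break can be located numerically. The sketch below assumes a single shift in the mean level and picks the split that minimizes the squared error of a two-segment mean model. The function names and demand figures are hypothetical. The search always returns some split, so it suggests where a break would be and does not test whether one exists.

```python
def sse(segment):
    """Sum of squared deviations of a segment from its own mean."""
    mean = sum(segment) / len(segment)
    return sum((x - mean) ** 2 for x in segment)


def find_break(series, min_size=2):
    """Index at which splitting the series gives the lowest total error."""
    best_index, best_cost = None, float("inf")
    for i in range(min_size, len(series) - min_size + 1):
        cost = sse(series[:i]) + sse(series[i:])
        if cost < best_cost:
            best_index, best_cost = i, cost
    return best_index


# Hypothetical demand that jumps from about 10 to about 20
demand = [10, 11, 9, 10, 11, 10, 20, 21, 19, 20, 21, 20]
break_at = find_break(demand)
print(break_at)
```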

5. Deep Learning for Time Series

Time series forecasting is especially challenging when working with long sequences, multi-step forecasts, noisy data, and multiple inputs and output variables.

Deep learning methods offer capabilities for time series forecasting such as automatic learning of temporal dependence and automatic handling of temporal structures like seasonality and trends.
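Before a network can learn temporal dependence, the series is commonly reframed as pairs of input and output windows. The sketch below shows only that preparation step for a multi-step forecast, and no network is trained. The function name and the numbers are hypothetical.

```python
def make_windows(series, n_inputs, n_outputs):
    """Split a series into (past values, future values) training samples."""
    samples = []
    last_start = len(series) - n_inputs - n_outputs
    for start in range(last_start + 1):
        inputs = series[start:start + n_inputs]
        targets = series[start + n_inputs:start + n_inputs + n_outputs]
        samples.append((inputs, targets))
    return samples


series = [10, 20, 30, 40, 50, 60, 70]

# Use three past steps to predict the next two steps
samples = make_windows(series, n_inputs=3, n_outputs=2)
for inputs, targets in samples:
    print(inputs, "->", targets)
```

Each sample pairs a short history with the values that followed it, which is the form a sequence model is trained on.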

Read more: Machine Learning Vs Deep Learning: Statistical Models That Redefine Business

Benefits of Using Deep Learning to Analyze Your Time Series

- Easy-to-extract features: Deep neural networks reduce the need for the data scaling, stationarity transformations, and feature engineering that time series forecasting usually requires. With training, these networks can learn to extract features from the raw input data on their own.

- Good at extracting patterns: Each neuron in a recurrent neural network (RNN) can retain information from previous inputs using its internal memory. This makes RNNs well suited to the sequential data of time series.

- Easy to predict from training data: Long short-term memory (LSTM) networks are very popular for time series. Data at different points in time can also be modeled with time-delay neural networks or with non-deep machine learning models such as gradient boosting regressors and random forests.

Time Series is Valuable for Business Development

Time series forecasting helps businesses make informed business decisions because it can be based on historical data patterns. It can be used to forecast future conditions and events.

- Reliability: Time series forecasting is most reliable when the data covers a broad time period, with a large number of observations collected over a long span. Information can be extracted by measuring data at various intervals.

- Seasonal patterns: Variations in the measured data points can reveal seasonal fluctuation patterns that serve as the basis for forecasts. Such information is of particular importance to markets whose products fluctuate seasonally because it helps them plan for production and delivery requirements.

- Trend estimation: Time series methods can also be used to identify trends. These are useful to managers when measurements show a decrease or an increase in sales for a particular product.

- Growth: Time series methods are useful for measuring both endogenous and financial growth. Endogenous growth is development that comes from within an organization, through its internal human capital, and leads to economic growth. For example, the impact of policy variables can be evidenced through time series analysis.

Read more: An Introduction to Deep Reinforcement Learning and its Significance

We can help you get the best of Time Series Analysis to benefit your business. Reach out to us to understand more about our data analytics and machine learning capabilities and how they can help your business grow.

Understanding the concept and significance of Deep Reinforcement Learning

The field of reinforcement learning has exploded in recent years, while the successes of supervised deep learning continue to pile up. People are now using deep neural nets to learn intelligent behavior in complex dynamic environments. Deep reinforcement learning is one of the most exciting fields in artificial intelligence because it combines the power of deep neural networks to comprehend the world with the ability to act on that understanding.

In deep learning, we take samples of data and supervise the way we compress and encode their representation in a form that we can reason about. Deep reinforcement learning takes this power and applies it to a world where sequential decisions have to be made.